Implementation and Simulation of Sequential Adverse Condition Scenarios for Autonomous Driving

Volume 10, Issue 5, Page No 1–10, 2025

Adv. Sci. Technol. Eng. Syst. J. 10(5), 1–10 (2025);

DOI: 10.25046/aj100501

Keywords: Autonomous Driving, Adverse Conditions, Scenario-based Simulation

Establishing an environment that allows for the quantitative evaluation of the ability of autonomous driving systems to respond to real-world adverse conditions is crucial to ensuring their safety and reliability. This study proposes a dynamic scenario-based simulation framework that simulates complex and sequential hazardous scenarios frequently encountered in actual road environments. The proposed scenarios are implemented based on real-world locations, including the Gwangan Bridge and Sinsundae Underpass in Busan, Republic of Korea, and the Autonomous Vehicle Test Road at Korea Intelligent Automotive Parts Promotion Institute (KIAPI) in Daegu. The proposed framework encompasses various adverse conditions, such as partial or complete loss of global navigation satellite system (GNSS) signals in underpasses and tunnels, degraded camera and light detection and ranging (LiDAR) sensor performance due to heavy rainfall and dense fog, and blind spot formation caused by surrounding vehicles. A notable feature of the proposed framework is its ability to realize continuous and realistic transitions between different conditions, for example, GNSS signal loss on entering a tunnel during regular road driving, immediately followed by exposure to heavy rainfall upon exiting the tunnel. The simulated scenarios enable the evaluation of how autonomous driving systems respond to and manage risks in real-world environments.

1. Introduction

Recently, the reliability and safety of the positioning system for autonomous driving systems (ADSs) have emerged as significant research topics in academia and industry. For autonomous vehicles to operate reliably in real-world environments, it is essential to systematically understand sensor performance degradation under various adverse conditions, including the undersides of bridges, tunnels, fog, heavy rain, and blind spots [1,2]. These conditions can degrade the performance of key sensors such as cameras, light detection and ranging (LiDAR), radio detection and ranging (radar), and global navigation satellite systems (GNSS). Critical functionalities, including localization, object detection, path planning, and adaptation to complex environmental factors such as weather, may be severely affected. In practice, adverse weather and environmental changes are frequently identified as major causes of autonomous driving accidents, prompting active research on overcoming these challenges in both autonomous driving technology and simulation studies [3–5].

However, most existing studies have been conducted in idealized or limited environments; thus, these studies do not sufficiently reflect the complexity of real roads or the risks posed by various adverse conditions. Therefore, there is an increasing demand for simulation-based frameworks that can quantitatively reproduce and evaluate realistic adverse conditions [6,7].

This study aims to construct adverse condition scenarios for evaluating the positioning system for ADS and implement these scenarios within the CARLA simulator environment. The scenarios are implemented in a simulation environment that models actual road conditions at the Gwangan Bridge and Sinsundae Underpass in Busan, Republic of Korea, and the autonomous vehicle test road (AVTR) of the Korea Intelligent Automotive Parts Promotion Institute (KIAPI) in Daegu, Republic of Korea. In this study, we implemented five adverse condition scenarios that encompass major adverse factors, including structural conditions such as underpasses and the undersides of bridges, atmospheric conditions such as fog and heavy rainfall, and continuous adverse situations such as blind spots caused by surrounding vehicles. Each scenario was designed to reflect hazardous situations on actual roads by controlling variables such as location and weather. In addition, by modularizing the scenarios through the Python application programming interface (API) and the robot operating system (ROS), reproducibility and scalability were ensured, enabling the scenarios to serve as a standard benchmark for evaluating the safety and reliability of autonomous driving algorithms.

The major contributions of this study are as follows: First, unlike previous works that mainly addressed single or isolated adverse conditions, this study implements sequential transitions between multiple hazards (e.g., GNSS signal loss inside a tunnel immediately followed by heavy rainfall upon exit). This design enables the realistic reproduction of complex risk situations frequently encountered in real-world driving environments. Second, the scenarios were developed based on real road environments such as the Gwangan Bridge and the Sinsundae Underpass in Busan, as well as the Autonomous Vehicle Test Road (AVTR) at KIAPI in Daegu, which is a dedicated real-world proving ground for testing. By combining actual road sections with test-track infrastructure, the framework enhances the realism and applicability of the simulation results. Third, each scenario was modularized using CARLA and ROS, ensuring scalability, reproducibility, and repeatability. This allows the framework to serve as a standard benchmark for evaluating the safety and reliability of positioning systems for ADS under diverse and sequential adverse conditions. Collectively, these contributions distinguish this study from existing scenario-based evaluation frameworks by providing a scalable, reproducible, and realistic platform for systematically analyzing the vulnerabilities of autonomous driving systems in sequential adverse environments.

The remainder of this paper is organized as follows. Section 2 reviews related work. Section 3 describes the scenario-based framework. Section 4 presents the simulation process. Sections 5 and 6 present the discussion and conclusion, respectively.

2. Related Works

Recently, various scenario-based testing frameworks have been proposed to evaluate the safety of the positioning system for ADS.

A study by [8] introduced a scenario-based framework for the safety assessment of the positioning system for ADS using a parameterized scenario library, random sampling, and genetic algorithm-based test case exploration techniques. This approach enables the efficient identification of potentially hazardous situations and facilitates repeated analysis of problematic scenarios via log storage and accident replay functionalities. A study by [9] focused on abnormal situations by implementing corner case scenarios within the CARLA simulator, where 32 different environmental parameters, including weather conditions, could be adjusted. Such approaches have been effectively used to assess the positioning system for ADS operation under extreme conditions. In addition, a study by [10] demonstrated that data-driven hazardous scenarios can be constructed and tested by implementing scenarios based on realistic trajectories, such as lane changes and roundabouts, within the CARLA simulator using actual traffic data. A study by [11] proposed a method that defines scenarios, conducts automated testing in a simulator environment, and links the results to real-world road tests using a formal approach. By connecting scenario generation and simulation based on formal specifications with the analysis of actual track test results, the method establishes a reliable validation framework that bridges virtual and real-world environments. Previous studies have advanced various methodologies for the multifaceted analysis and verification of the safety and reliability of the positioning system for ADS through scenario-based evaluation. The KING framework, which automatically generates safety-critical scenarios by adversarially adjusting the trajectories of background vehicles, was proposed in [12]. The method uses kinematic gradients to modify adversarial background trajectories and uses the generated data to enhance the risk avoidance and generalization performance of the agent.

Existing studies [8–12] have mainly focused on single environmental variables (e.g., weather or road structure) or on verifying ADS performance through random or generated scenario-based simulations. In contrast, this study extends both the scope and realism of scenario-based evaluation by incorporating (1) real-world road environments, (2) sequential and combined adverse condition transitions, and (3) the integration of virtual infrastructures. Therefore, our work is distinguished from prior frameworks and provides a novel approach for validating ADS performance degradation under complex and diverse hazardous situations.

Therefore, evaluation scenarios should be designed by considering various realistically possible adverse conditions. In particular, adverse conditions that extend beyond the operational design domain (ODD) pose direct threats to the perception and localization capabilities of sensors in autonomous vehicles. The authors of [13] presented various adverse conditions and their relationships with the ODD in an autonomous driving environment.

3. Scenario-based Framework

3.1. Adverse Condition Scenarios

To evaluate the robustness of ADS under adverse conditions, we propose a scenario-based simulation framework that emphasizes realism, sequential hazards, and reproducibility.

First, the framework is grounded in real-world road environments, including the Gwangan Bridge and the Sinsundae Underpass in Busan, and the AVTR in Daegu, which is a dedicated proving ground for autonomous driving validation. Second, the framework introduces sequential transitions between adverse conditions, such as GNSS loss inside a tunnel followed immediately by heavy rainfall upon exit. These transitions reproduce compounded hazards frequently encountered in real driving environments. Third, the framework was implemented in the CARLA simulator with ROS integration, enabling modularization, scalability, and reproducibility. Each scenario is defined using a situation–action–event structure, allowing systematic description and extension. Finally, the adverse conditions were aligned with the ODD. While the ODD represents the safe operating boundaries of an ADS, each scenario intentionally introduces violations (e.g., GNSS unavailability, reduced visibility) to assess the safety margins of the system.

Systematically defining various adverse conditions that may be encountered on actual roads is vital for evaluating the safety and reliability of the positioning system for ADS. Such adverse conditions serve as key elements in evaluation scenarios, because they directly affect not only environmental factors but also the perception and localization functions of ADS sensors. Therefore, in this study, adverse condition evaluation scenarios are defined as situations that may pose threats to safety during vehicle operation.

The scenario construction process was approached from two perspectives. The first was to define the conditions or environments that induced risks, and the second was to use simulation software to implement situations in which the performance of sensors in autonomous vehicles was degraded. Through this comprehensive approach, various potential threat situations that can occur on real roads can be effectively modeled.

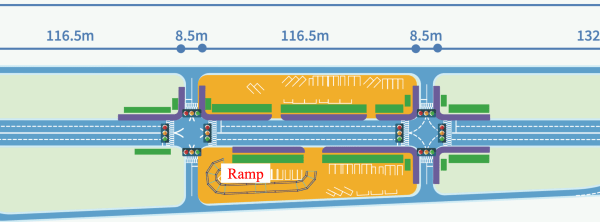

Figure 1 shows the KIAPI AVTR map used for the driving tests under adverse conditions in this study.

Figure 2 presents (a) the actual road conditions of the Sinsundae Underpass and (b) its simulator implementation.

Figure 2: Simulation environment of Sinsundae Underpass

Figure 3 shows (a) the real-world appearance of the lower section of the Gwangan Bridge and (b) its implementation in the simulator.

Figure 3: Simulation environment of the lower section of Gwangan Bridge.

The adverse conditions addressed in this study focus on situations that directly pose risks to the localization and sensor perception capabilities of autonomous vehicles. For example, the simulation includes situations in which the GNSS signal is completely lost when entering tunnels or underpasses, or where vehicle positioning accuracy is significantly degraded due to GNSS signal blockage in areas densely surrounded by tall buildings or beneath bridges. In addition, under severe weather conditions such as dense fog and heavy rainfall, the increase in airborne particles makes it difficult for camera sensors to perceive the external environment, thereby reducing the reliability of image-based object detectors. LiDAR sensors experience a loss of data points and decreased signal strength due to the scattering and attenuation of laser signals by airborne particles. Similarly, radar sensors may also suffer from diminished overall perception performance because of electromagnetic signal absorption and scattering under heavy rainfall. Furthermore, blind spots caused by surrounding vehicles or road structures may limit the field of view of camera, LiDAR, and radar sensors, which can significantly reduce the accuracy of precise localization and the detection of key targets.

Therefore, the scenarios considered in this study combine the definition of hazardous environments with the software simulation of sensor performance degradation. Examples include signal loss or partial blockage when entering tunnels or underpasses, degradation of camera and other sensor performance due to dense fog or heavy rain, and blind spots caused by surrounding vehicles and structures.

Adverse conditions can also be extended to dynamic scenarios, allowing the continuous implementation of sequential hazards. For example, complex risk situations can be created, such as sudden loss of GNSS signals while entering an underpass during normal road driving or immediate exposure to heavy rainfall after passing through a tunnel. This approach reflects problematic situations that frequently occur in real road environments and plays a critical role in evaluating the practical response capabilities of the positioning system for ADS. By implementing these adverse conditions within a simulator environment, this study aims to provide foundational data for enhancing the safety and reliability of the positioning system for ADS.

Scenarios of this kind, in which multiple adverse conditions occur in sequence, were implemented in this study. Examples include sudden loss of GNSS signals at specific locations, such as tunnel entrances during normal road driving, and continuous exposure to rainfall immediately after exiting an underpass or tunnel.

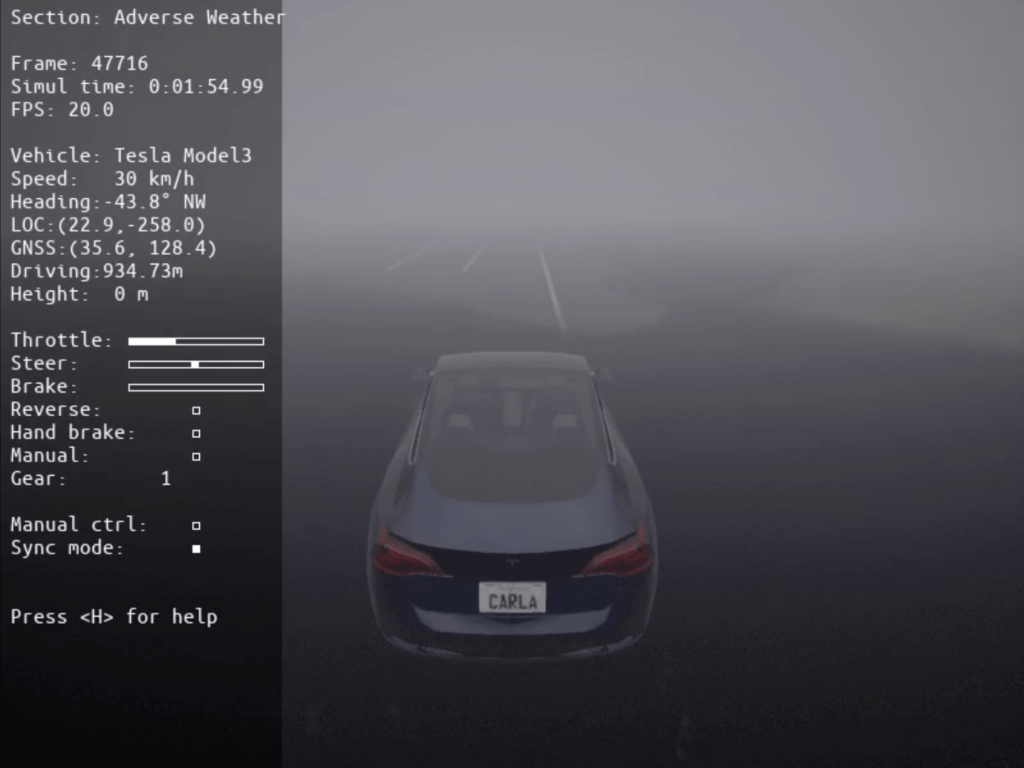

The primary adverse conditions implemented in the simulator for this study are presented in Figures 4–8.

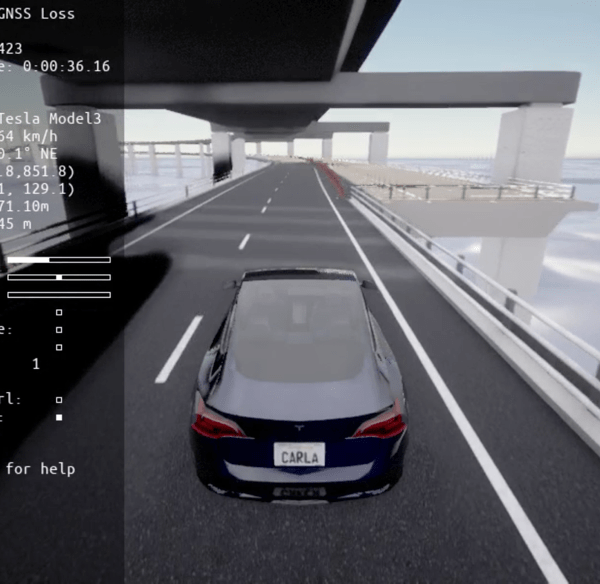

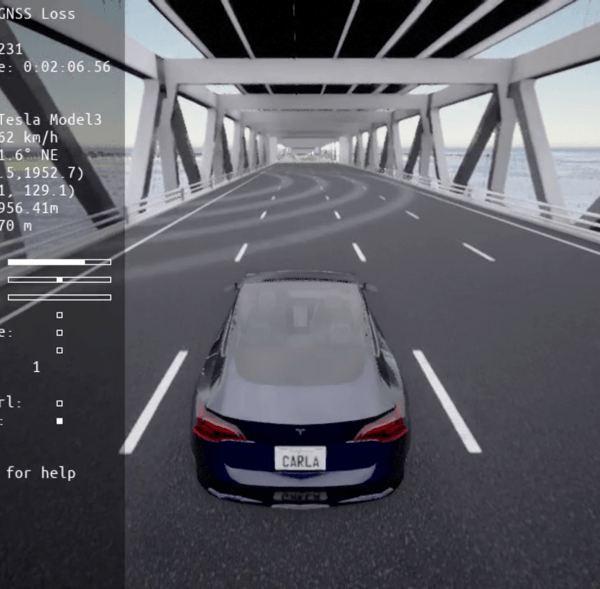

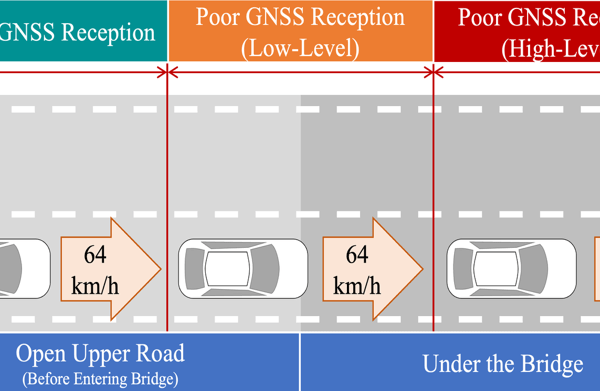

Figure 4 shows the lower section of the Gwangan Bridge, where the bridge structure can partially block GNSS signals or cause multipath effects. Thus, both the strength of the received signals and the number of visible satellites decrease, significantly increasing the positioning errors compared with those under normal conditions. This scenario realistically reproduces adverse conditions commonly encountered in such environments.
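As an illustration of how partial and complete GNSS degradation might be injected into a stream of position fixes, the following is a minimal sketch rather than the implementation used in this study. The `GnssMode` enum, the `degrade_fix` function, and the noise magnitudes are hypothetical and chosen only to show that a partially blocked signal yields larger errors than an open-sky signal.

```python
import random
from enum import Enum
from typing import Optional, Tuple


class GnssMode(Enum):
    NORMAL = "normal"    # open sky
    PARTIAL = "partial"  # e.g. beneath a bridge deck
    LOST = "lost"        # e.g. inside a tunnel or underpass


# Hypothetical 1-sigma horizontal noise in metres per mode (illustrative values only).
NOISE_SIGMA_M = {GnssMode.NORMAL: 0.5, GnssMode.PARTIAL: 5.0}


def degrade_fix(east_m: float, north_m: float, mode: GnssMode,
                rng: random.Random) -> Optional[Tuple[float, float]]:
    """Return a noisy local (east, north) fix, or None when the signal is blocked."""
    if mode is GnssMode.LOST:
        return None
    sigma = NOISE_SIGMA_M[mode]
    return east_m + rng.gauss(0.0, sigma), north_m + rng.gauss(0.0, sigma)
```

A real multipath error model would be biased and time-correlated rather than zero-mean Gaussian; the sketch only captures the increase in error magnitude.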

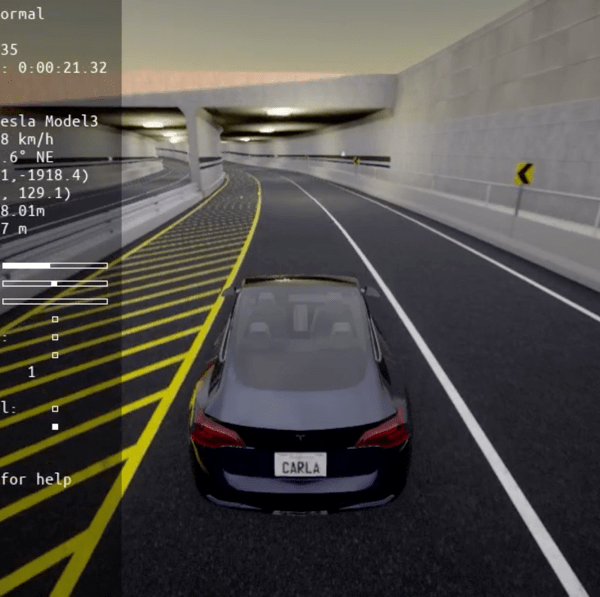

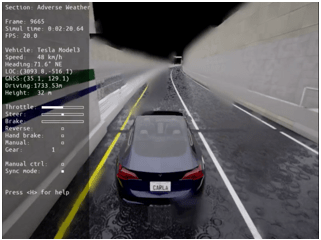

Figure 5 illustrates potential conditions encountered inside underpasses and tunnels, where the structure completely blocks GNSS signals, creating an extreme environment in which the ADS cannot rely on any external positioning information. Consequently, vehicle localization must depend on alternative methods such as sensor fusion, onboard inertial measurement units, and wheel odometry, all of which are subject to rapid accumulation of drift errors. If a vehicle travels for an extended period in a tunnel or underpass without appropriate correction signals, there is a significant risk of a substantial decrease in the overall driving safety and the reliability of path planning for the ADS.
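The accumulation of drift during a GNSS outage can be illustrated with a simple dead-reckoning sketch. It assumes a straight drive at constant speed and a constant, uncorrected gyroscope bias; the function name `dead_reckoning_error` and the bias value used in the usage note are hypothetical and not taken from this study.

```python
import math


def dead_reckoning_error(speed_mps: float, duration_s: float,
                         gyro_bias_dps: float, dt: float = 0.1) -> float:
    """Integrate a straight drive with a constant heading-rate bias and
    return the final position error in metres relative to the true path."""
    x = y = heading = 0.0
    bias_rad = math.radians(gyro_bias_dps)
    steps = int(round(duration_s / dt))
    for _ in range(steps):
        heading += bias_rad * dt                 # uncorrected bias accumulates
        x += speed_mps * math.cos(heading) * dt  # estimated position drifts
        y += speed_mps * math.sin(heading) * dt
    true_x = speed_mps * steps * dt              # ground truth: straight line along x
    return math.hypot(x - true_x, y)
```

For example, `dead_reckoning_error(48 / 3.6, 60.0, 0.1)` evaluates the drift after one minute at the 48 km/h speed used in the underpass scenarios, with an assumed bias of 0.1 °/s. For small heading errors the lateral error grows roughly with the square of the outage duration, which is why long tunnels are markedly more demanding than short underpasses.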

Figure 6 shows a simulated situation in which dense fog increases the concentration of fine particles in the atmosphere, thereby significantly degrading both the visibility and signal quality of camera and LiDAR sensors. Consequently, the reliability of image-based object detection decreases rapidly, and the signal strength of LiDAR data is markedly reduced. Camera sensors experience a higher probability of object detection failure due to limited visibility and decreased contrast, whereas LiDAR sensors are subject to reduced data points and an increased risk of false detections caused by signal attenuation and scattering.

Figure 7 shows the complex hazardous conditions of heavy rain, where the perception performance of not only optical sensors, such as cameras and LiDAR, but also radar sensors is generally degraded. Cameras experience reduced visibility and image distortion, leading to a sharp decline in the reliability of object recognition. LiDAR sensors suffer from significant decreases in valid data points and signal strength due to the scattering and attenuation of laser signals by raindrops. Radar sensors also encounter issues under heavy rain, such as increased noise and weakened reflected signals, resulting in reduced accuracy in distance and velocity measurements.

Ultimately, these limitations lead to a significant decrease in the perception and decision-making reliability of the positioning system for ADS, thereby threatening safe vehicle operation.

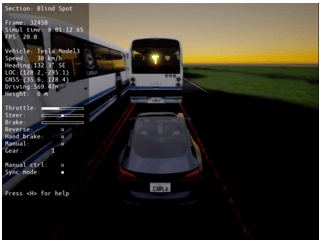

Figure 8 shows a blind spot scenario that simulates real-world hazardous situations in which the fields of view of the primary sensors of an autonomous vehicle, such as cameras, LiDAR, and radar, are temporarily obstructed by large surrounding vehicles or road structures. Consequently, the vehicle faces significant constraints in detecting objects, identifying overtaking vehicles, and recognizing pedestrians within certain areas, and its localization and obstacle avoidance accuracy are also significantly reduced. Sensor occlusion may lead to failure to detect critical hazards such as overtaking vehicles, unexpected obstacles, and pedestrians, posing a direct threat to the decision-making and driving safety of the autonomous system. In real road environments, such blind spots can frequently occur at signalized intersections, bridges, underpasses, and other complex settings, making the precise implementation and evaluation of such scenarios essential.
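The geometric idea behind a blind spot can be sketched as a two-dimensional line-of-sight test. The example below assumes that the occluding vehicle is approximated by an axis-aligned rectangle on the ground plane and checks whether the straight segment from the sensor to a target crosses it, using Liang-Barsky clipping. The function `is_occluded` and the coordinates in the usage note are hypothetical; the simulator itself resolves occlusion through its own ray casting and rendering.

```python
from typing import Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def is_occluded(sensor: Point, target: Point, box: Box) -> bool:
    """True if the line of sight from sensor to target crosses the box.

    Clips the sensor-target segment against the axis-aligned box (Liang-Barsky).
    """
    (x0, y0), (x1, y1) = sensor, target
    dx, dy = x1 - x0, y1 - y0
    x_min, y_min, x_max, y_max = box
    t_enter, t_exit = 0.0, 1.0
    for p, q in ((-dx, x0 - x_min), (dx, x_max - x0),
                 (-dy, y0 - y_min), (dy, y_max - y0)):
        if p == 0.0:
            if q < 0.0:
                return False  # segment is parallel to this edge and outside it
            continue
        t = q / p
        if p < 0.0:
            t_enter = max(t_enter, t)
        else:
            t_exit = min(t_exit, t)
        if t_enter > t_exit:
            return False      # segment misses the box
    return True
```

With a sensor at `(0, 0)` and a truck footprint `(8, -1, 12, 1)`, a target at `(20, 0)` directly behind the truck is reported as occluded, whereas a target at `(20, 10)` in the adjacent area is not.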

The degradation in the perception performance of all sensors can critically affect the core functions of the positioning system for ADS, including object detection and lane recognition, and may be a major cause of increased accident risk in real-world scenarios. Therefore, each scenario was designed to comprehensively reflect adverse conditions that may occur in real road environments, enabling a precise evaluation of the changes in the perception and localization capabilities of the positioning system for ADS in extreme circumstances.

3.2. Scenario Set

The proposed driving safety scenarios comprise three key elements: situation, action, and event.

The first element, situation, refers to the specific problem or challenge encountered by the autonomous vehicle. For example, this may involve the loss of GNSS signals upon entering a tunnel or the degradation of camera and LiDAR perception performance caused by dense fog. This element is designed to effectively reproduce the impact of particular adverse conditions on the sensor reliability and perception capability of the positioning system for ADS.

The second element, action, represents the physical driving environment in which the autonomous vehicle operates, including external driving conditions such as road structure, speed limits, and route characteristics. The effects of specific adverse conditions were observed under simplified road conditions, excluding dynamic objects such as pedestrians and other vehicles.

The third element, event, defines the timing and conditions under which adverse conditions occur. For example, the scenario may specify a sudden onset of fog during travel on a straight road segment or the beginning of heavy rainfall immediately before entering an intersection. These events are explicitly set within the scenario to reflect realistic temporal and spatial conditions in the simulation, thereby enabling the precise evaluation of the response of the autonomous system.

These three elements are organically combined to allow for the systematic and extensible construction of various adverse conditions, providing a practical evaluation framework for verifying the safety and reliability of the positioning system for ADS in real road environments.
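One possible way to encode the situation, action, and event elements as a data structure is sketched below. The class names, field names, and trigger labels are hypothetical; only the lane, speed, and order of conditions are taken from the definition of Scenario 2 in Table 2.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass(frozen=True)
class Event:
    trigger: str    # where or when the condition starts (hypothetical label)
    condition: str  # adverse condition applied from that point on


@dataclass(frozen=True)
class Scenario:
    name: str
    situation: str           # the problem the vehicle faces
    action: str              # driving environment: road, lane, speed
    target_speed_kmh: float
    events: List[Event] = field(default_factory=list)

    def conditions_in_order(self) -> List[str]:
        """Return the adverse conditions in the order they are triggered."""
        return [e.condition for e in self.events]


scenario_2 = Scenario(
    name="Underpass_HeavyFog",
    situation="GNSS loss inside an underpass, then dense fog after the exit",
    action="First lane of the regular road and the underpass",
    target_speed_kmh=48.0,
    events=[
        Event(trigger="enter_underpass", condition="gnss_loss"),
        Event(trigger="exit_underpass", condition="dense_fog"),
    ],
)
```

Keeping events as an ordered list makes the sequential nature of the hazards explicit, and a new scenario can be added by instantiating another `Scenario` without changing the surrounding logic.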

This study developed five sequential adverse condition driving scenarios (Scenarios 1–5) using the three elements.

- Scenario 1 (Underbridge_GNSSLoss): The vehicle travels under the lower deck of the Gwangan Bridge, experiencing partial GNSS signal loss.

Table 1 presents the definition of Scenario 1 in terms of its three components: situation, action, and event.

Table 1: Definition of Scenario 1 – The scenario describes partial GNSS signal loss when the vehicle enters the lower section of the Gwangan Bridge while driving under clear weather conditions.

| Definition | Description |
| --- | --- |
| Situation | The vehicle travels on a regular road under clear weather and then enters the lower section of a multilevel bridge, resulting in a partial loss of GNSS signals. |
| Action | The vehicle maintains a speed of approximately 64 km/h while driving in the second lane of both the regular road and the lower section of the bridge. |
| Event | The vehicle enters the lower section of the bridge, which is a GNSS shadow zone, from the regular road and continues driving with partial loss of GNSS signals. |

Figure 9 visualizes Scenario 1, where an autonomous vehicle drives through the lower section of the Gwangan Bridge and experiences partial or complete loss of GNSS signals, resulting in restricted navigation.

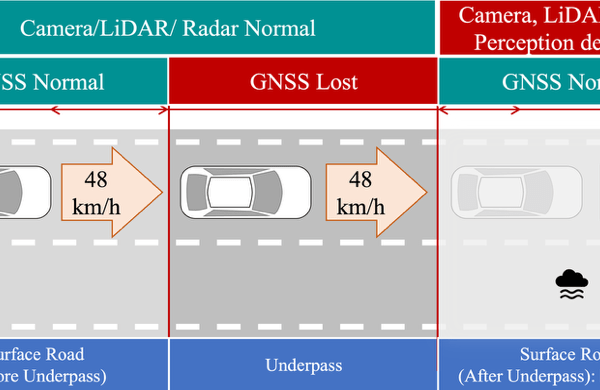

- Scenario 2 (Underpass_HeavyFog): GNSS loss occurs inside an underpass, and dense fog immediately after exit degrades camera/LiDAR performance.

Table 2 presents the definition of Scenario 2 in terms of its three components: situation, action, and event.

Table 2: Definition of Scenario 2 – The scenario reproduces GNSS signal loss inside an underpass followed by dense fog immediately after exit, degrading the perception performance of camera and LiDAR sensors.

| Definition | Description |
| --- | --- |
| Situation | 1. While driving on a regular road under clear weather, the vehicle enters an enclosed underpass, resulting in the loss of GNSS signals.<br>2. After passing through the underpass and returning to the regular road, GNSS signals are restored; however, dense fog occurs in this section, limiting the perception performance of camera and LiDAR sensors. |
| Action | The vehicle maintains the first lane of both the regular road and the underpass while driving at approximately 48 km/h. |
| Event | Upon entering the underpass from the regular road, GNSS signal loss occurs, and immediately after exiting the underpass, the vehicle drives under dense fog, resulting in limited perception by the camera and LiDAR sensors. |

Figure 10 shows a step-by-step visualization of a scenario in which an autonomous vehicle drives through a sequential adverse environment, encountering dense fog immediately after passing through an underpass in Scenario 2.

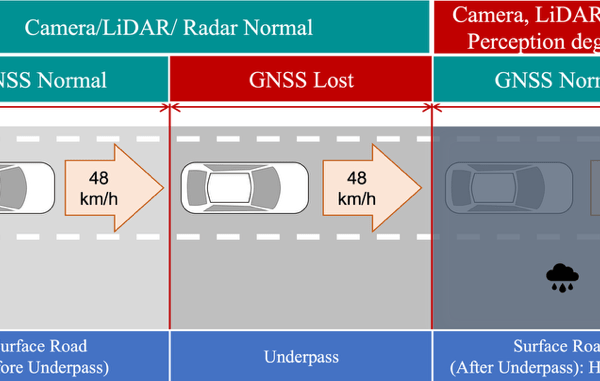

- Scenario 3 (Underpass_HeavyRain): Similar to Scenario 2, but heavy rainfall follows the underpass exit, limiting camera, LiDAR, and radar perception.

Table 3 presents the definition of Scenario 3 in terms of its three components: situation, action, and event.

Table 3: Definition of Scenario 3 – The vehicle experiences GNSS signal loss while driving inside an underpass, and upon exit, heavy rainfall limits the perception of cameras, LiDAR, and radar.

| Definition | Description |
| --- | --- |
| Situation | 1. The vehicle is driving on a regular road under clear weather and then enters an enclosed underpass, resulting in the loss of GNSS signals.<br>2. After passing through the underpass and returning to the regular road, GNSS signals are fully restored. However, in this section, heavy rainfall occurs, limiting the perception performance of key sensors such as cameras, LiDAR, and radar. |
| Action | The vehicle maintains the first lane of both the regular road and the underpass while driving at approximately 48 km/h. |
| Event | Upon entering the underpass from the regular road, GNSS signal loss occurs, and immediately after exiting the underpass, the vehicle drives under heavy rain, resulting in limited perception by the camera, LiDAR, and radar sensors. |

Figure 11 shows a step-by-step visualization of a driving scenario in which an autonomous vehicle encounters heavy rainfall immediately after passing through an underpass.

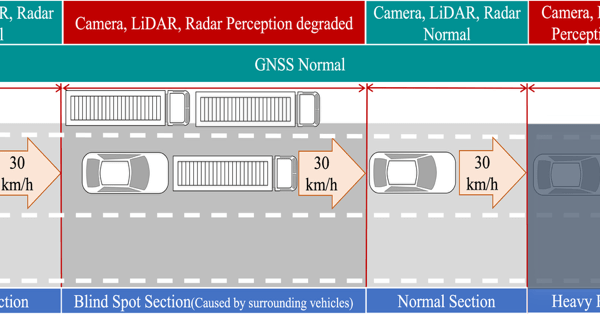

- Scenario 4 (AVTR_BlindSpot_HeavyRain): Blind spots created by surrounding vehicles on the AVTR test road are followed by heavy rainfall, compounding sensor perception challenges.

Table 4 presents the definition of Scenario 4 in terms of its three components: situation, action, and event.

Table 4: Definition of Scenario 4 – Blind spots caused by surrounding vehicles are followed by heavy rainfall on the KIAPI AVTR test road, jointly reducing the perception capabilities of major sensors.

| Definition | Description |
| --- | --- |
| Situation | While driving on the AVTR, blind spots are created by other vehicles, which limits the perception performance of the camera, LiDAR, and radar sensors. |
| Action | The vehicle maintains the first lane of two available lanes while driving at approximately 30 km/h. |
| Event | After passing through the blind spot section, the vehicle continues in a section in which normal sensor perception is possible. However, heavy rainfall subsequently occurs, again limiting the perception performance of the camera, LiDAR, and radar sensors. |

Figure 12 illustrates, step by step, a driving scenario in which blind spots and heavy rainfall occur sequentially on the AVTR within the proving ground.

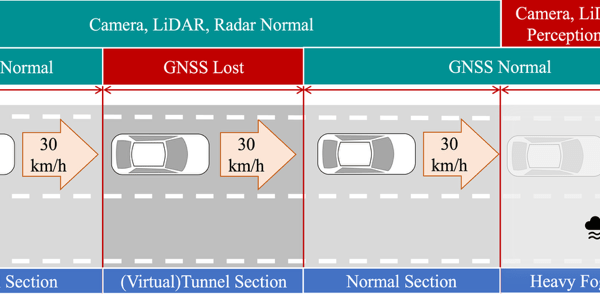

- Scenario 5 (AVTR_VTunnel_HeavyFog): A virtual tunnel on the AVTR causes GNSS signal loss, followed by dense fog upon exit, enabling the evaluation of consecutive hazards not reproducible with existing infrastructure.

Table 5 presents the definition of Scenario 5 in terms of its three components: situation, action, and event.

Table 5: Definition of Scenario 5 – A virtual tunnel on the KIAPI AVTR test track induces GNSS signal loss, followed by dense fog upon exit, enabling the evaluation of ADS under consecutive adverse conditions.

| Definition | Description |
| --- | --- |
| Situation | In this scenario, the vehicle enters a (virtual) tunnel section while driving on the AVTR, resulting in GNSS signal loss. |
| Action | The vehicle maintains the first lane of two available lanes while driving at approximately 30 km/h. |
| Event | After exiting the tunnel section and continuing on a normal road segment, the vehicle encounters heavy fog, which limits the perception performance of the camera, LiDAR, and radar sensors. |

Figure 13 shows a step-by-step visualization of a driving scenario in which a virtual tunnel and dense fog occur sequentially on the AVTR within the proving ground. The virtual tunnel included in this scenario does not physically exist on the AVTR test road. Instead, this element was intentionally designed and integrated as a virtual feature to facilitate the implementation of adverse condition scenarios. The incorporation of a virtual tunnel enables the evaluation of the positioning system for ADS under consecutive adverse conditions, such as GNSS signal loss and subsequent sensor performance degradation, that cannot be easily reproduced with the existing road infrastructure. By introducing such virtual environments, this study expands the range of testable scenarios and enhances both the flexibility and comprehensiveness of safety and reliability assessments for autonomous vehicles.

4. Experiment

4.1. Environments

In this study, five sequential adverse condition scenarios were simulated. To enhance the realism of the adverse conditions, the effects of airborne particles under heavy rainfall and dense fog on sensor performance were quantitatively incorporated. The heavy rainfall scenario was defined as a precipitation rate of 30 mm/h or higher, and the dense fog scenario as a visibility of 100 m or less. Under these conditions, the degradation of sensor performance was applied stepwise (Table 6). For LiDAR sensors under dense fog, the signal strength was set to approximately 35% of normal levels and the number of points to 85% (a 15% point drop rate). Under heavy rainfall, the signal strength and number of points were reduced to 60% and 75% of normal levels (a 25% point drop rate), respectively. For radar sensors, only the heavy rainfall condition was considered, with the number of points limited to 85% of normal values.

Table 6: LiDAR sensor intensity and point drop rate under adverse conditions

| Sensor | Dense fog: intensity | Dense fog: point drop rate | Heavy rain: intensity | Heavy rain: point drop rate |
| --- | --- | --- | --- | --- |
| LiDAR | 35% | 15% | 60% | 25% |

These changes in sensor quality were detected and applied in real time through the CARLA simulator and the ROS control module, effectively simulating the degradation of sensor reliability caused by environmental changes encountered on actual roads.
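The thresholds and the LiDAR values in Table 6 can be combined into a short sketch of the degradation step. The function names, the point representation, the uniform random selection of dropped points, and the precedence of rain over fog when both thresholds are met are assumptions made for illustration; the study does not specify how dropped points are selected.

```python
import random
from typing import List, Tuple

LidarPoint = Tuple[float, float, float, float]  # (x, y, z, intensity)

# Intensity scale and point drop rate per condition, taken from Table 6.
LIDAR_PROFILE = {
    "dense_fog": {"intensity_scale": 0.35, "drop_rate": 0.15},
    "heavy_rain": {"intensity_scale": 0.60, "drop_rate": 0.25},
}


def classify(precip_mm_h: float, visibility_m: float) -> str:
    """Map weather readings to a condition using the stated thresholds.

    Assumption: heavy rain takes precedence if both thresholds are met.
    """
    if precip_mm_h >= 30.0:
        return "heavy_rain"
    if visibility_m <= 100.0:
        return "dense_fog"
    return "clear"


def degrade_lidar(points: List[LidarPoint], condition: str,
                  rng: random.Random) -> List[LidarPoint]:
    """Randomly drop points and attenuate the intensity of the survivors."""
    profile = LIDAR_PROFILE.get(condition)
    if profile is None:
        return list(points)  # clear weather: pass the cloud through unchanged
    kept = [p for p in points if rng.random() >= profile["drop_rate"]]
    return [(x, y, z, i * profile["intensity_scale"]) for x, y, z, i in kept]
```

Because the drop is random, the fraction of retained points only approximates the nominal 85% or 75% for any single scan and converges to it over many points.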

The simulation vehicle was equipped with a camera, depth camera, LiDAR, radar, and GPS sensors. Parameters such as the position, orientation, and characteristics of each sensor were defined in the CARLA environment using the JSON (JavaScript Object Notation) format. This approach enabled flexible experimental application of various sensor configurations and conditions.
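A sensor configuration of this kind might look like the following sketch, which parses a JSON description and checks that each entry is complete. The sensor type strings are CARLA blueprint identifiers, but the overall layout, the sensor IDs, the mounting poses, and the attribute values are hypothetical and do not reproduce the configuration file used in this study.

```python
import json
from typing import Dict, List

# Hypothetical configuration; IDs, poses, and attribute values are illustrative.
SENSOR_CONFIG_JSON = """
{
  "sensors": [
    {"type": "sensor.camera.rgb", "id": "front_camera",
     "spawn_point": {"x": 1.5, "y": 0.0, "z": 2.0, "roll": 0.0, "pitch": 0.0, "yaw": 0.0},
     "image_size_x": 1280, "image_size_y": 720, "fov": 90.0},
    {"type": "sensor.camera.depth", "id": "front_depth",
     "spawn_point": {"x": 1.5, "y": 0.0, "z": 2.0, "roll": 0.0, "pitch": 0.0, "yaw": 0.0},
     "image_size_x": 1280, "image_size_y": 720, "fov": 90.0},
    {"type": "sensor.lidar.ray_cast", "id": "roof_lidar",
     "spawn_point": {"x": 0.0, "y": 0.0, "z": 2.4, "roll": 0.0, "pitch": 0.0, "yaw": 0.0},
     "channels": 32, "range": 100.0},
    {"type": "sensor.other.radar", "id": "front_radar",
     "spawn_point": {"x": 2.0, "y": 0.0, "z": 1.0, "roll": 0.0, "pitch": 0.0, "yaw": 0.0}},
    {"type": "sensor.other.gnss", "id": "gnss",
     "spawn_point": {"x": 0.0, "y": 0.0, "z": 2.0, "roll": 0.0, "pitch": 0.0, "yaw": 0.0}}
  ]
}
"""

REQUIRED_KEYS = {"type", "id", "spawn_point"}
POSE_KEYS = {"x", "y", "z", "roll", "pitch", "yaw"}


def load_sensors(text: str) -> List[Dict]:
    """Parse the JSON text and check that every sensor entry is complete."""
    sensors = json.loads(text)["sensors"]
    for sensor in sensors:
        missing = REQUIRED_KEYS - sensor.keys()
        if missing:
            raise ValueError(f"{sensor.get('id', '?')}: missing {sorted(missing)}")
        if set(sensor["spawn_point"]) != POSE_KEYS:
            raise ValueError(f"{sensor['id']}: incomplete spawn_point")
    ids = [s["id"] for s in sensors]
    if len(ids) != len(set(ids)):
        raise ValueError("sensor ids must be unique")
    return sensors
```

Validating the file before spawning sensors means that a misconfigured experiment fails at load time instead of partway through a simulation run.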

4.2. Simulation

The CARLA open-source autonomous driving simulator was used in this study to realistically implement sequential adverse condition scenarios. CARLA can generate sensor data for autonomous driving, including cameras, LiDAR, and GPS, under various weather, time, and traffic conditions, and allows real-time monitoring and control of virtual vehicle operations.

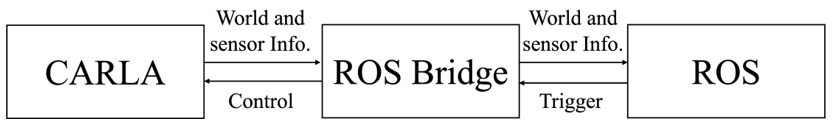

As shown in Figure 14, the system architecture comprises CARLA, the ROS Bridge, and ROS. The ROS Bridge manages communication between CARLA and ROS, publishing sensor data and vehicle state information generated by CARLA as ROS topics. Conversely, control commands generated in ROS are transmitted to CARLA. ROS is an open-source framework for robot software development that manages various hardware and software modules as nodes and exchanges sensor data and control commands via messages.

The CARLA simulator enables the effective implementation of various adverse conditions such as fog, heavy rain, and nighttime, allowing for the design of scenarios that closely resemble real road environments. However, CARLA does not automatically reflect sensor signal degradation or data loss under adverse conditions. Therefore, in this study, control logic was implemented in the ROS nodes to artificially degrade sensor data quality by injecting noise, dropping data, blocking signals, or switching sensors to an OFF state when an adverse condition is triggered. Each scenario was modularized using the Python API, making it easy to add new conditions and conduct repeated experiments.
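The trigger logic described above can be sketched independently of ROS as a lookup from the position of the vehicle along its route to the set of active adverse conditions. The `Zone` class, the function names, and the distances are hypothetical; in the actual system this logic would run inside a ROS node that subscribes to the sensor topics published by the ROS Bridge.

```python
from dataclasses import dataclass
from typing import List, Optional, Set, Tuple


@dataclass(frozen=True)
class Zone:
    start_m: float  # distance along the route where the condition begins
    end_m: float    # distance along the route where the condition ends
    condition: str  # e.g. "gnss_off" or "dense_fog"


# Hypothetical layout modelled on Scenario 2; the distances are illustrative.
SCENARIO_2_ZONES = [
    Zone(200.0, 600.0, "gnss_off"),    # inside the underpass
    Zone(600.0, 1200.0, "dense_fog"),  # immediately after the exit
]


def active_conditions(route_pos_m: float, zones: List[Zone]) -> Set[str]:
    """Return every condition whose zone contains the current route position."""
    return {z.condition for z in zones if z.start_m <= route_pos_m < z.end_m}


def gate_gnss(fix: Optional[Tuple[float, float]],
              conditions: Set[str]) -> Optional[Tuple[float, float]]:
    """Suppress the GNSS fix while the 'gnss_off' condition is active."""
    return None if "gnss_off" in conditions else fix
```

Placing the fog zone so that it starts exactly where the GNSS-off zone ends reproduces the immediate transition between hazards, and overlapping zones would allow several conditions to be active simultaneously.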

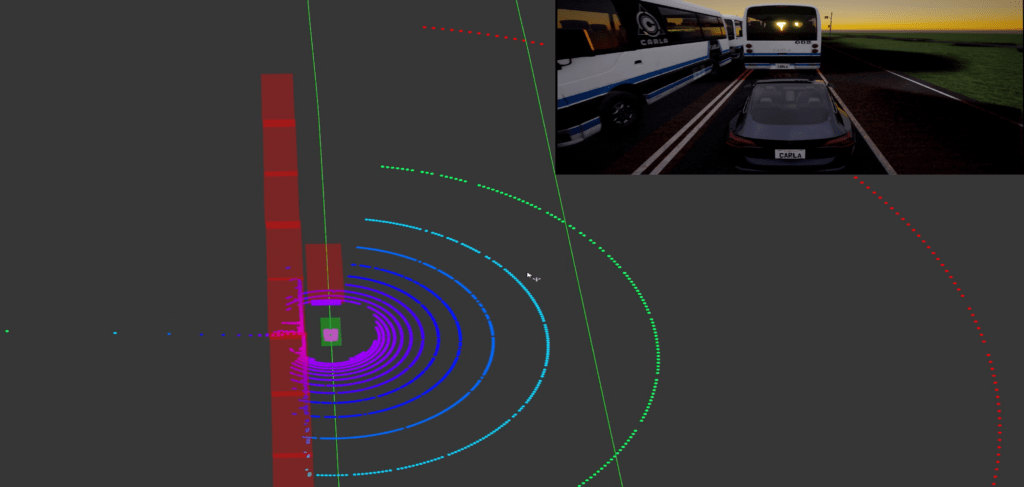

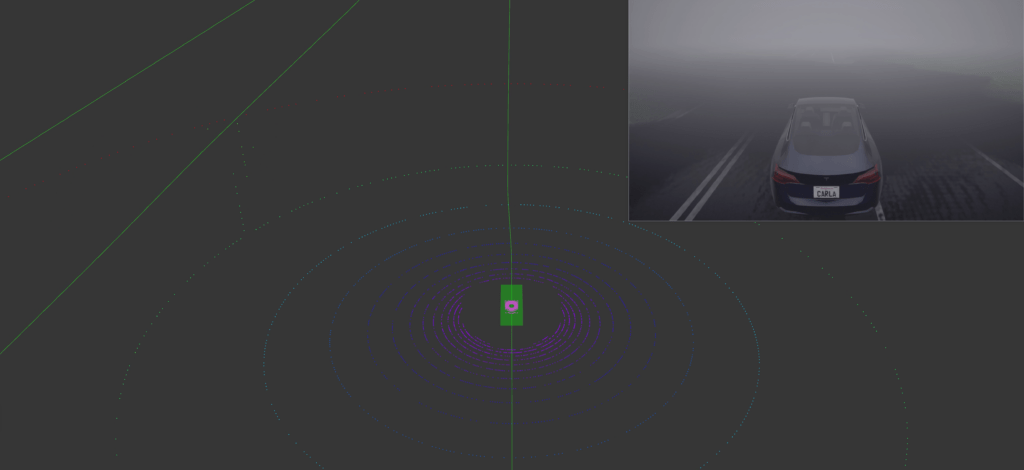

Through the proposed sequential adverse condition simulation framework, various hazardous scenarios that may occur on real roads can be dynamically reproduced, allowing observation of the effect of sensor performance degradation on the driving and localization performance of ADS. Actual examples of sensor performance degradation under adverse conditions are presented in Figure 15. Figure 15(a) shows a simulation of the degraded perception performance of camera and LiDAR sensors due to blind spots caused by surrounding vehicles in Scenario 4. Figure 15(b) illustrates performance degradation under heavy fog conditions in Scenario 5. Both cases are visualized using the RVIZ tool.

For reference, the simulations were performed on a workstation equipped with an Intel i9 CPU and an NVIDIA RTX TITAN GPU. The software environment comprised the CARLA simulator (version 0.9.15) integrated with ROS, and all simulation and control modules were implemented in Python (version 3.8).

Figure 15: Examples of sensor performance degradation under adverse conditions

5. Discussion

The proposed sequential adverse condition scenarios were designed with scalability and openness in mind so that they can serve as benchmarks for future research and industrial applications. They are not limited to specific road environments or sensor configurations; rather, they have a modular structure that allows new road types, sensor combinations, and driving conditions to be added. Existing scenarios can therefore be modified and extended efficiently to accommodate diverse requirements.

In addition, the proposed scenarios can serve as a practical standard for evaluating the reliability and safety of ADS positioning systems. Because they reflect realistic and diverse hazardous situations, they could be used as a common benchmark for the safety evaluation and certification of autonomous driving technologies. If adopted as a standardized platform for performance comparison and validation across the research community and industry, the proposed scenarios can facilitate objective measurement of technological progress and promote efficient improvements.

The scenarios developed in this study may be released as open scenarios for real-world autonomous driving evaluation in the future. They can serve as a basis for assessing the performance of ADS positioning systems and identifying improvements, thereby making a practical contribution to the advancement of autonomous driving technologies. In particular, the sequential adverse condition scenarios are expected to serve as foundational data for future research on driving safety evaluation and the development of risk mitigation algorithms.

This study has several limitations. First, the proposed scenarios were implemented in the CARLA simulator, and further validation in other simulation platforms is required to ensure general applicability. Second, the sensor degradation models were based on simplified probabilistic parameters (e.g., LiDAR intensity reduction and point drop rates), which may not fully capture the physical responses of real sensors under adverse conditions. Third, inevitable discrepancies exist between simulation and real-world driving; thus, pilot tests on actual roads are needed to enhance the realism and transferability of the results. In particular, the scenarios and sensor models were constructed for experimental purposes prior to in-vehicle deployment, which may limit their direct applicability to commercial ADS.

Furthermore, although the importance of quantitative ADS performance metrics (e.g., localization error, detection rate, sensor fusion reliability) is well recognized, such values were not directly measured in this study. This is because sensor degradation was manually defined and injected into the simulation rather than observed from an operational ADS. Accordingly, the main contribution of this work lies in reproducing diverse sequential adverse conditions and providing a reproducible environment that can serve as a testbed for future performance evaluation. As future work, we plan to integrate real ADS algorithms into the framework to quantitatively analyze performance degradation under the proposed scenarios.
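As a pointer for that future evaluation, one of the metrics named above, localization error, could be computed by comparing simulator ground-truth positions with the positions estimated by an ADS. The sketch below is only an illustration and was not part of this study: the function name, the 2D `(x, y)` representation in metres, and the convention of marking missing estimates with `None` are all assumptions.

```python
import math


def localization_rmse(ground_truth, estimates):
    """Root-mean-square 2D position error over paired (x, y) samples in metres.

    Samples whose estimate is None (for example, no position fix) are skipped
    and counted separately. Returns (rmse, number_of_missing_samples).
    """
    if len(ground_truth) != len(estimates):
        raise ValueError("ground_truth and estimates must have the same length")
    squared_errors = []
    missing = 0
    for truth, estimate in zip(ground_truth, estimates):
        if estimate is None:
            missing += 1
            continue
        dx = truth[0] - estimate[0]
        dy = truth[1] - estimate[1]
        squared_errors.append(dx * dx + dy * dy)
    if not squared_errors:
        return float("nan"), missing
    return math.sqrt(sum(squared_errors) / len(squared_errors)), missing


# Illustrative values only: three ground-truth samples, one estimate missing.
truth = [(0.0, 0.0), (1.0, 0.0), (2.0, 0.0)]
estimated = [(0.0, 0.0), (1.0, 1.0), None]
rmse, missing = localization_rmse(truth, estimated)
print(f"RMSE = {rmse:.3f} m, missing samples = {missing}")
```

Reporting the number of missing samples alongside the error keeps complete signal loss, such as a GNSS outage in a tunnel, visible instead of silently excluding it from the average.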

6. Conclusion

In this study, we developed scenarios that systematically reproduce adverse conditions, which have recently emerged as critical factors in evaluating the reliability and safety of ADS positioning systems. To account for sensor performance degradation under realistic adverse conditions, such as the undersides of bridges, underpasses, fog, heavy rain, and blind spots, we modeled the Gwangan Bridge and Sinsundae Underpass in Busan and the AVTR at the KIAPI proving ground in Daegu in the CARLA simulator.

This study designed concrete and realistic scenarios that encompass various real-world adverse conditions. Each scenario was implemented in a modular and extensible form in CARLA, a widely used autonomous driving simulator, which enables a repeatable and consistent evaluation environment. The operation of each scenario was verified in the simulation environment, providing a platform for evaluating ADS positioning systems.

These scenarios can serve as standard benchmarks for assessing the safety and reliability of ADS positioning systems in future research and industrial applications. We intend to make them available as open datasets to support the advancement of autonomous driving technologies and to stimulate related research. Furthermore, we plan to continuously expand the scope of the scenarios by adding diverse road types, sensor configurations, and driving conditions, thereby contributing to the comprehensive and practical evaluation and enhancement of autonomous driving technologies.

Acknowledgment

This work was supported by the Technology Innovation Program (20018198, Development of Hyper self-vehicle location recognition technology in the driving environment under bad conditions) funded by the Ministry of Trade, Industry, and Energy (MOTIE, Korea).

- N. Kalra, S. M. Paddock, “Driving to safety: How many miles of driving would it take to demonstrate autonomous vehicle reliability?” Transportation Research Part A, 94, 182–193, 2016, doi: 10.1016/j.tra.2016.09.010.

- A. Dosovitskiy, G. Ros, F. Codevilla, A. Lopez, V. Koltun, “CARLA: An open urban driving simulator”, CoRL, 2017, doi: 10.48550/arXiv.1711.03938.

- H. Caesar, V. Bankiti, A. H. Lang, S. Vora, V. E. Liong, Q. Xu, A. Krishnan, Y. Pan, G. Baldan, O. Beijbom, “nuScenes: A multimodal dataset for autonomous driving”, in Proceedings of the IEEE/CVF conference on computer vision and pattern recognition, 11621–11631, 2020, doi: 10.1109/CVPR42600.2020.01164.

- F. Codevilla, E. Santana, A. Lopez, A. Gaidon, “Exploring the limitations of behavior cloning for autonomous driving”, in International Conference on Computer Vision (ICCV), 9328–9337, 2019, doi: 10.1109/ICCV.2019.00942.

- Y. Zhang, A. Carballo, H. Yang, K. Takeda, “Autonomous driving in adverse weather conditions: A survey”, arXiv preprint arXiv:2112.08936, 2021, doi: 10.1016/j.isprsjprs.2022.12.021.

- S. Zang, M. Ding, D. Smith, P. Tyler, T. Rakotoarivelo, M. A. Kaafar, “The impact of adverse weather conditions on autonomous vehicles: How rain, snow, fog, and hail affect the performance of a self-driving car”, IEEE Vehicular Technology Magazine, 14, 103–111, 2019, doi: 10.1109/MVT.2019.2892497.

- D. Neumeister, D. Pape, “Automated vehicles and adverse weather: Final report”, U.S. Department of Transportation, Federal Highway Administration, June 2019. Available: www.its.dot.gov/index.htm

- R. Li, T. Qin, C. Widdershoven, “ISS-Scenario: Scenario-based testing in CARLA”, in Theoretical Aspects of Software Engineering (TASE), 279–286, 2024, doi: 10.1007/978-3-031-64626-3_16.

- M. Čávojský, E. Šlapak, M. Dopiriak, G. Bugar, J. Gazda, “3CSim: CARLA corner case simulation for control assessment in autonomous driving”, arXiv preprint arXiv:2409.10524, 2024, doi: 10.48550/arXiv.2409.10524.

- B. Osiński, P. Milos, A. Jakubowski, P. Zięcina, M. Martyniak, C. Galias, A. Breuer, S. Homoceanu, H. Michalewski, “CARLA real traffic scenarios – novel training ground and benchmark for autonomous driving”, arXiv preprint arXiv:2012.11329, 2020, doi: 10.48550/arXiv.2012.11329.

- D. J. Fremont, E. Kim, Y. V. Pant, S. A. Seshia, A. Acharya, X. Bruso, P. Wells, S. Lemke, Q. Lu, S. Mehta, “Formal scenario-based testing of autonomous vehicles: From simulation to the real world”, in International Conference on Intelligent Transportation (ITSC), 1–8, 2020, doi: 10.1109/ITSC45102.2020.9294368.

- N. Hanselmann, K. Renz, K. Chitta, A. Bhattacharyya, A. Geiger, “KING: Generating safety-critical driving scenarios for robust imitation via kinematics gradients”, in Proceedings of the European Conference on Computer Vision, 333–350, 2022, doi: 10.1007/978-3-031-19839-7_20.

- H.-S. Cho, Y.-J. Park, M. Park, J. Son, “Study on designing scenarios to evaluate adverse condition positioning for highly reliable autonomous driving”, The Transactions of the Korean Society of Automotive Engineers, 31, 1021–1037, 2023, doi: 10.7467/KSAE.2023.31.12.1021.
