AI-Based Photography Assessment System using Convolutional Neural Networks

Volume 10, Issue 2, Page No 28–34, 2025

Adv. Sci. Technol. Eng. Syst. J. 10(2), 28–34 (2025);

DOI: 10.25046/aj100203


Keywords: Automated assessment, Deep learning, Convolutional neural networks, Education Technology, Image classification, AI in education

Providing timely and meaningful feedback in photography education is challenging, particularly in large classes where manual assessment can delay skill development. This paper presents M-Stock, an AI-based automated photo evaluation system that uses Convolutional Neural Networks (CNNs) to assess student photography assignments through a web browser. M-Stock evaluates both technical aspects (such as lighting, composition, and exposure) and creative elements, providing students with real-time, formative feedback. The system was trained on a diverse dataset that combines graded student course assignments with student submissions to Shutterstock labelled by commercial acceptance, and it achieved an overall accuracy of 97.18% with an average prediction time of 46.1 milliseconds per image. Experiments assessed the system’s performance across varying image resolutions and batch sizes, indicating that it scales well enough for real-time classroom use. Additionally, a pilot study with students indicated that M-Stock’s feedback positively affected their technical skills and encouraged self-directed learning. The results demonstrate M-Stock’s potential as a transformative tool for photography education, combining high accuracy, immediate feedback, and pedagogical value to support continuous learning. Future improvements will focus on refining creative assessments and extending the system to other visual arts disciplines.

1. Introduction

In recent years, digital technology has revolutionized the way photography is taught, offering students unprecedented access to resources and tools for developing their skills. University-level courses on photography increasingly emphasize both theoretical knowledge and practical expertise, aiming to produce competent professionals equipped for the rapidly evolving media and creative industries [1]. However, as photography courses expand in scope and enrolment, especially in digital classrooms, educators face significant challenges in efficiently assessing student work [2]. The task of providing timely, meaningful feedback is often hindered by the volume of student submissions, which can slow students’ development of photography skills [3].

Traditional assessment methods for photography assignments are often manual and time-consuming, leading to delays that can impede learning and limit student engagement.

Studies have highlighted that real-time feedback plays a significant role in accelerating skill acquisition in domains requiring both technical precision and creative expression [4]. Given this, automated assessment systems powered by artificial intelligence (AI) have emerged as promising tools for enhancing the learning experience. AI technologies, especially deep learning, have shown considerable potential in automating visual assessments, enabling more personalized, consistent, and timely feedback for students [5].

Despite these advancements, current AI-based assessment systems in photography education primarily focus on evaluating technical attributes, such as lighting, composition, and exposure, often overlooking the creative and subjective aspects critical to artistic development. Furthermore, many existing tools provide only summative feedback, offering a one-time evaluation rather than iterative feedback that supports continuous learning and improvement. Addressing these gaps requires an assessment platform that can balance both technical and creative evaluations while also offering formative, actionable feedback.

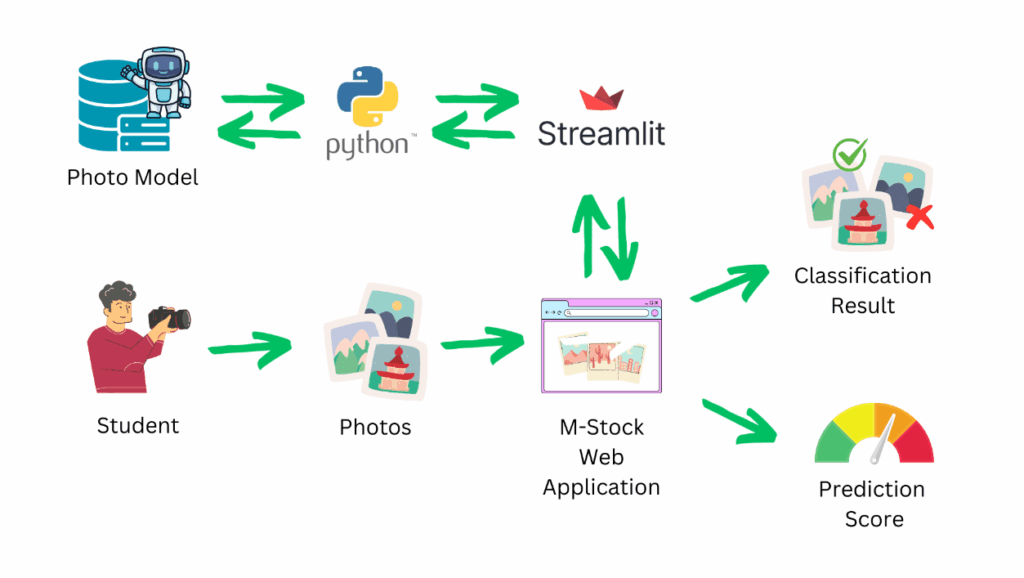

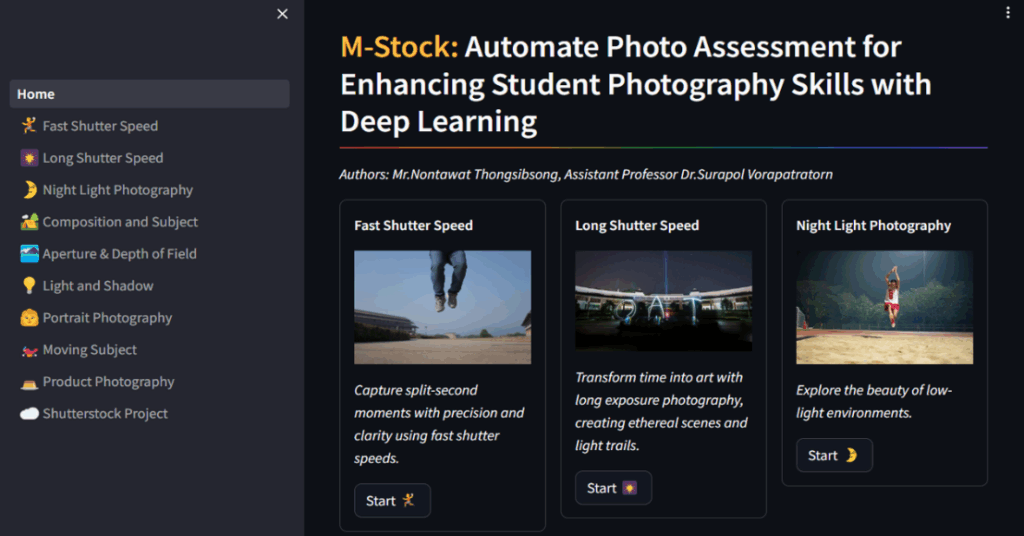

This paper introduces M-Stock (Mae Fah Luang University Photo Stock), an AI-driven automated photo evaluation platform designed to support student learning in photography by providing real-time feedback on both technical and artistic elements of their work. Using Convolutional Neural Networks (CNNs), M-Stock evaluates photographs against predefined criteria developed with educational experts and aligned with industry standards, ensuring both relevance to the professional field and pedagogical value. In addition, M-Stock is built with scalability and ease of use in mind, allowing seamless integration into classroom environments where students can receive immediate feedback on their submissions. The overall structure of the proposed system is depicted in Figure 1.

2. Related Work

The integration of artificial intelligence (AI) in education has shown promising potential to enhance learning outcomes by providing personalized and adaptive feedback across various fields. In creative education, AI-driven assessment tools have been increasingly applied, yet challenges remain, particularly in domains like photography, where both technical and creative competencies are essential. This section reviews recent advancements in AI-supported educational systems, focusing on automated assessment in creative disciplines and identifying key gaps that the M-Stock system aims to address.

2.1. AI in Education for Automated Assessment

AI technologies, particularly deep learning, have transformed educational assessment by enabling automated grading and personalized feedback systems. These systems have proven effective in evaluating diverse student outputs, including essays, problem-solving exercises, and visual projects, providing more timely feedback than traditional methods. Adaptive learning environments and intelligent tutoring systems use AI to tailor educational content and assessment to individual learners’ needs, which has been shown to improve learning efficiency and engagement [6]. As 21st-century learning frameworks emphasize critical thinking, creativity, and lifelong learning [7], AI-based assessments must evolve to support these skills, especially in creative subjects like photography. AI technologies have also been applied to stock photography: platforms such as Shutterstock and Adobe Stock have incorporated AI algorithms to evaluate the quality of images submitted by photographers, offering real-time feedback and ensuring that only images meeting commercial standards are accepted [8]. This use of AI for large-scale image evaluation highlights its potential for photography education, where it can be used to assess student submissions and provide immediate feedback on technical aspects such as focus, lighting, and composition [9]. However, most existing AI-based assessment systems are optimized for structured, quantifiable tasks, such as quizzes and assignments graded on objective metrics. This approach is limited when it comes to subjective assessments, such as those required in photography education, which involve creative expression and aesthetic judgment.

2.2. Automated Assessment in Photography Education

In photography education, AI-based assessment tools have typically focused on evaluating technical attributes, such as exposure, sharpness, and composition. As professional photography requires both technical proficiency and artistic expression, it is critical that educational tools reflect industry standards and expectations [10]. Recent research [11] has explored AI-supported assessment in photography, demonstrating the potential of Convolutional Neural Networks (CNNs) to classify images based on technical quality. Such systems provide valuable feedback for improving technical proficiency but often lack the capability to assess the creative and subjective qualities of an image. Moreover, many existing tools in photography education offer only summative feedback, which does not facilitate iterative improvement and skill refinement, both of which are critical for creative learning. Unlike these existing systems, M-Stock aims to bridge this gap by integrating both technical and creative evaluations, providing formative feedback that encourages continuous learning. The system’s feedback is designed not only to assess basic technical aspects but also to guide students in enhancing their artistic interpretation and aesthetic sensibilities, offering a more comprehensive educational experience.

2.3. Convolutional Neural Networks for Image Classification

Convolutional Neural Networks (CNNs) have emerged as a robust tool for image classification, widely applied in various fields, including medical imaging, autonomous driving, and creative media [12]. CNNs excel at identifying spatial hierarchies and features in visual data, making them well-suited for assessing technical quality in photography. While CNNs have demonstrated high accuracy in image classification, most studies in this area have focused on technical metrics without exploring how these models might be adapted to assess creative and subjective qualities in educational contexts. Other deep learning models, such as transformers and attention-based networks, have also shown success in visual tasks, providing an alternative to CNNs. However, CNNs remain the primary choice for this study due to their well-established efficiency and proven effectiveness in photography-related tasks. Future iterations of M-Stock could explore alternative models or ensemble approaches to further enhance its evaluative capabilities, particularly for assessing creativity.

2.4. Existing Gaps in Automated Photography Assessment

Despite the advances in AI-based assessment tools, significant gaps remain in the automated evaluation of creative student outputs. Most current systems excel at objective assessments, but they struggle to capture subjective elements, such as artistic style and emotional impact, which are essential in photography education [13]. Additionally, the lack of iterative, formative feedback in current photography assessment tools limits their effectiveness in supporting continuous skill development. The need for systems that can provide nuanced, ongoing feedback on both technical and creative elements of student work remains largely unmet. In response to these challenges, M-Stock was designed to provide a balanced approach to automated photography assessment, incorporating both technical and creative evaluations. By integrating AI-based formative feedback, M-Stock addresses the limitations of existing systems, offering students timely, constructive feedback that promotes self-directed learning and skill enhancement.

3. Proposed Method

The M-Stock system was developed to automate the assessment of student photography, providing a balanced evaluation that addresses both technical and creative aspects of students’ work. This section outlines the methodology used to design, implement, and evaluate the M-Stock system, focusing on data collection, model training, feedback mechanisms, and system architecture.

3.1. Data Gathering

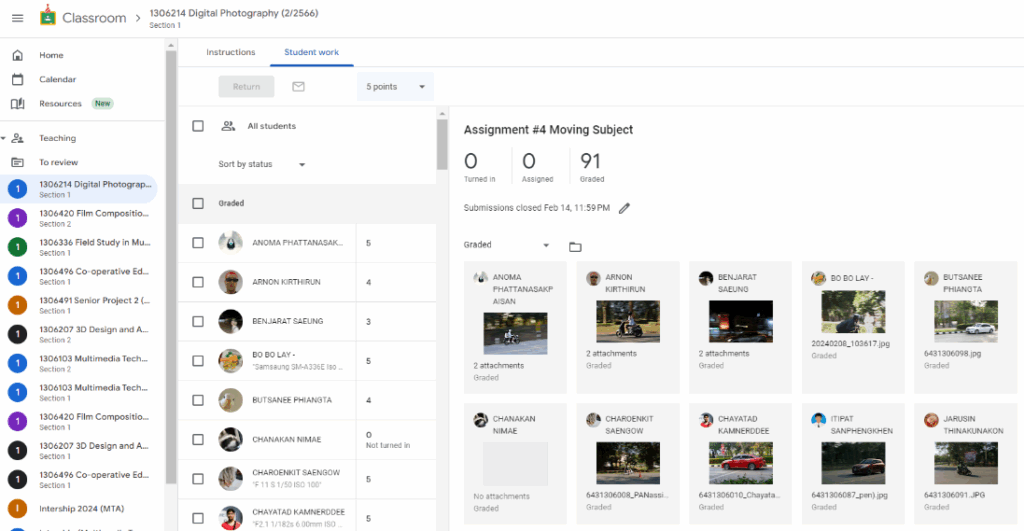

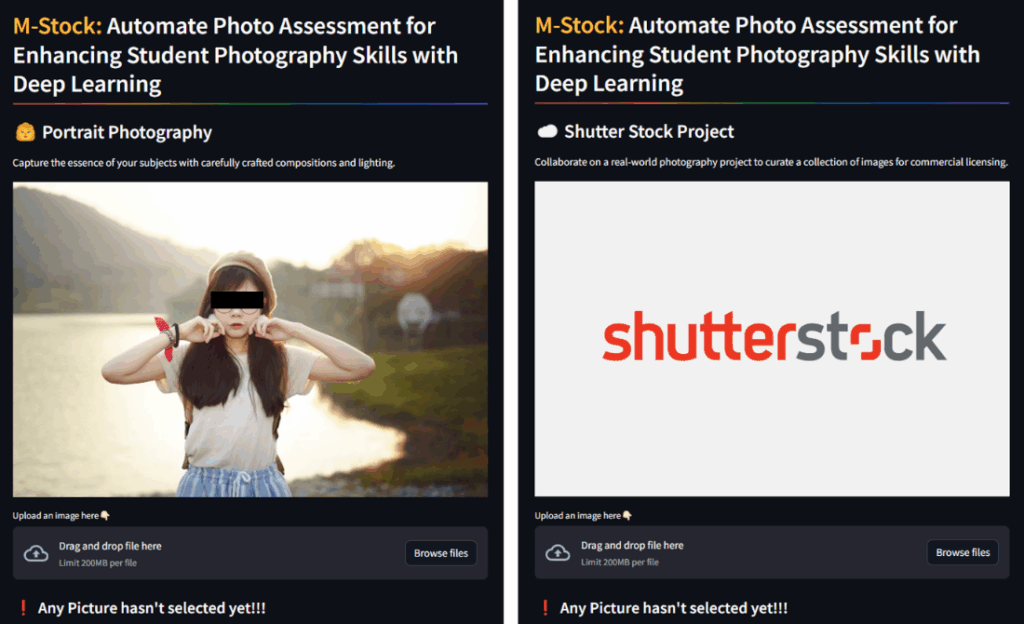

The M-Stock system’s training dataset combines images from two primary sources to cover diverse photography skills and quality levels.

Student Assignments from Photography Courses: Images were collected from photography courses at Mae Fah Luang University. These assignments covered topics such as fast shutter speed, long shutter speed, night light photography, composition and subject, aperture and depth of field, light and shadow, portrait photography, moving subjects, and product photography. The assignments were submitted via Google Classroom [14], and each image was categorized into one of three performance levels (Excellence, Good, and Bad) based on criteria established by instructors and photography experts, as shown in Figure 2.

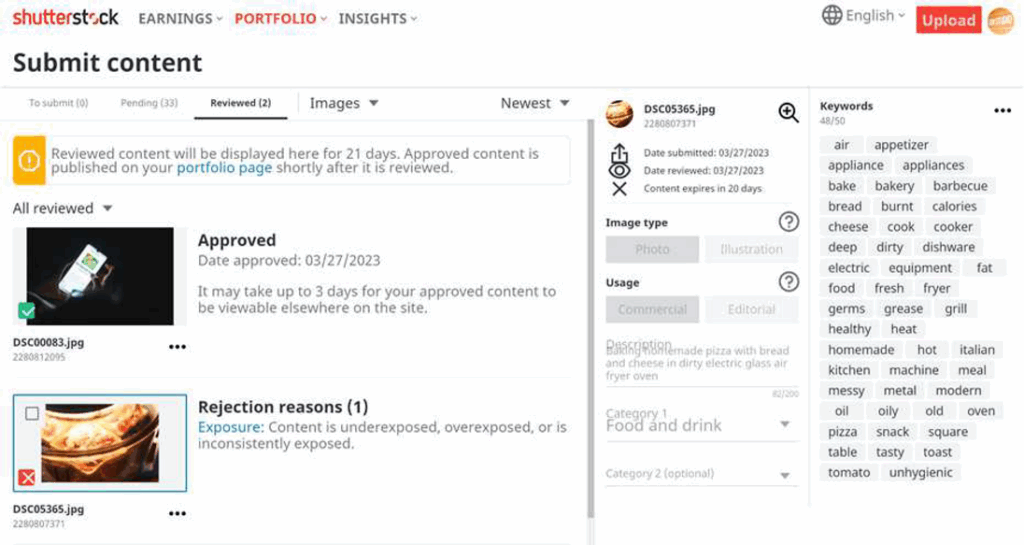

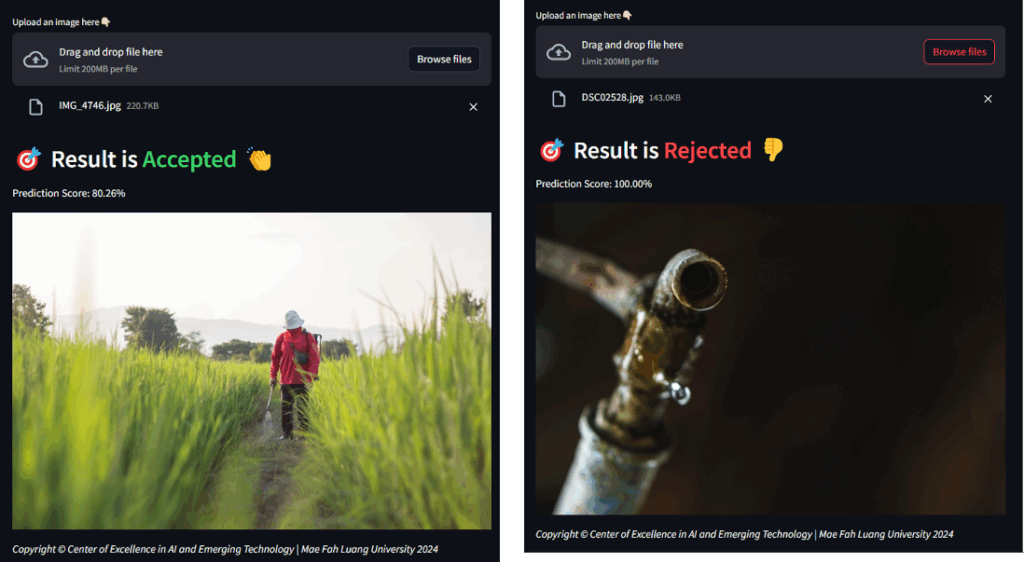

Commercial Standards from Shutterstock Submissions: To integrate professional criteria, the dataset includes student submissions to Shutterstock [15], labelled as either Accepted (commercially viable) or Rejected (commercially inadequate). This source introduces real-world standards into the model so that its quality judgements align with industry requirements, as depicted in Figure 3. Images were stored in a server database, organized by assignment type and quality category. This collection strategy is intended to help the M-Stock model generalize across different photography styles, skill levels, and educational contexts.
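The paper states only that images were stored in a server database organized by assignment type and quality category. As a hypothetical illustration of that organization, the sketch below assumes a folder tree of the form `root/<assignment>/<label>/<image>` and indexes it with Python's standard `pathlib`, giving each label an integer index within its assignment (the kind of integer target a sparse categorical cross-entropy loss expects). The folder layout, the `Record` type, and the alphabetical label-to-index mapping are all assumptions, not the authors' schema.

```python
from dataclasses import dataclass
from pathlib import Path
import tempfile

# Hypothetical layout: root/<assignment>/<label>/<image file>
# e.g. root/portrait/Excellence/img001.jpg or root/shutterstock/Rejected/img042.png
IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png"}


@dataclass(frozen=True)
class Record:
    path: Path
    assignment: str
    label: str
    label_index: int  # integer class id, stable within one assignment


def index_dataset(root: Path) -> list[Record]:
    """Collect one Record per image file, grouped by assignment folder."""
    records: list[Record] = []
    for assignment_dir in sorted(p for p in root.iterdir() if p.is_dir()):
        # Alphabetical label order gives a reproducible label -> index map
        # (e.g. Bad=0, Excellence=1, Good=2 when all three folders exist).
        label_dirs = sorted(p for p in assignment_dir.iterdir() if p.is_dir())
        for index, label_dir in enumerate(label_dirs):
            for image in sorted(label_dir.iterdir()):
                if image.suffix.lower() in IMAGE_SUFFIXES:  # skip non-image files
                    records.append(
                        Record(image, assignment_dir.name, label_dir.name, index)
                    )
    return records


if __name__ == "__main__":
    # Build a tiny throwaway tree to show what the index looks like.
    with tempfile.TemporaryDirectory() as tmp:
        root = Path(tmp)
        for assignment, label in [("portrait", "Bad"), ("portrait", "Good"),
                                  ("shutterstock", "Accepted")]:
            folder = root / assignment / label
            folder.mkdir(parents=True)
            (folder / "sample.jpg").touch()
        for r in index_dataset(root):
            print(r.assignment, r.label, r.label_index)
```

Keeping the label-to-index mapping per assignment matches the per-assignment models reported later in Table 1, where the graded assignments have three levels and the Shutterstock project has two.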

3.2. Model Training and Selection

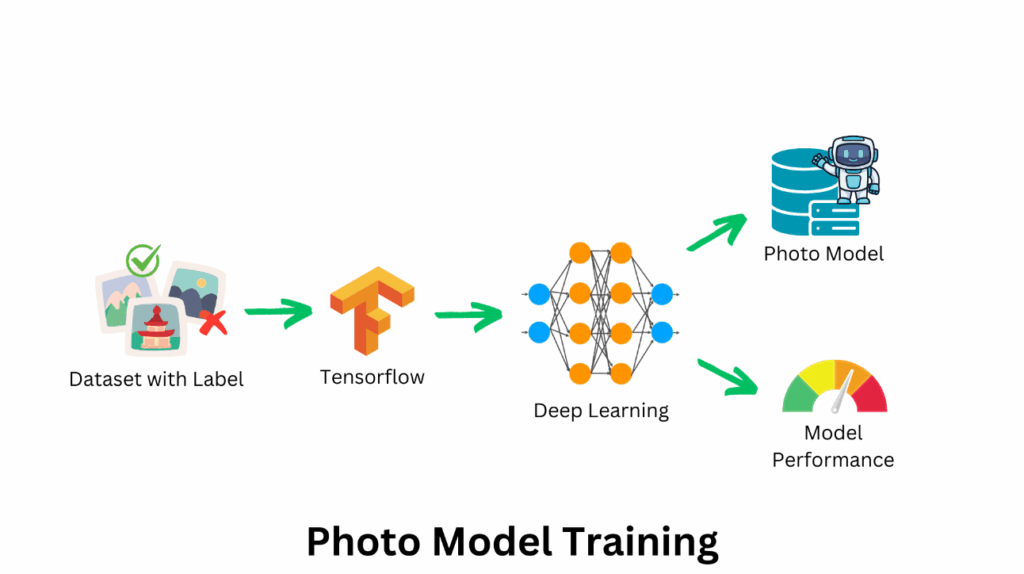

The M-Stock system utilizes Convolutional Neural Networks (CNNs) [16] due to their strong performance in visual data analysis and spatial feature extraction. CNNs were chosen over alternative models, such as transformers, because of their efficiency in handling complex image data with lower computational requirements, making them suitable for real-time feedback in educational environments. The CNN architecture includes multiple convolutional layers, ReLU activations, batch normalization, max-pooling layers, and fully connected layers. A final softmax classifier predicts image categories (e.g., Excellence, Good, Bad, Accepted, Rejected), as shown in Figure 4.
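A minimal Keras sketch of the kind of network described above, written against the TensorFlow 2.x `tf.keras` API. The number of convolutional blocks, the filter counts, the dense-layer width, and the use of global average pooling before the fully connected layers are our assumptions, not the configuration reported in the paper; the compile step uses the Adam optimizer and sparse categorical cross-entropy loss named in the training process of this section.

```python
import tensorflow as tf
from tensorflow import keras

layers = keras.layers  # tf.keras layer namespace


def build_photo_model(num_classes: int, image_size: int = 800) -> keras.Model:
    """Sketch of a per-assignment CNN classifier (layer sizes are assumptions).

    num_classes: e.g. 3 for Excellence/Good/Bad, 2 for Accepted/Rejected.
    image_size:  side length of the square input; the paper resizes to 800 x 800.
    """
    inputs = keras.Input(shape=(image_size, image_size, 3))
    x = layers.Rescaling(1.0 / 255)(inputs)  # scale 0-255 pixels to [0, 1]
    # Assumed: three blocks of Conv2D -> BatchNorm -> ReLU -> MaxPool.
    for filters in (32, 64, 128):
        x = layers.Conv2D(filters, kernel_size=3, padding="same", use_bias=False)(x)
        x = layers.BatchNormalization()(x)
        x = layers.Activation("relu")(x)
        x = layers.MaxPooling2D(pool_size=2)(x)
    # Assumed: global average pooling keeps the fully connected part small.
    x = layers.GlobalAveragePooling2D()(x)
    x = layers.Dense(128, activation="relu")(x)
    outputs = layers.Dense(num_classes, activation="softmax")(x)  # class probabilities

    model = keras.Model(inputs, outputs)
    model.compile(
        optimizer=keras.optimizers.Adam(),  # default learning rate (an assumption)
        loss="sparse_categorical_crossentropy",  # integer class labels
        metrics=["accuracy"],
    )
    return model


if __name__ == "__main__":
    model = build_photo_model(num_classes=3)
    probs = model.predict(tf.zeros((1, 800, 800, 3)), verbose=0)
    print(probs.shape)  # (1, 3)
```

Building one such model per assignment, with `num_classes` set to 3 for the graded assignments or 2 for the Shutterstock project, would mirror the per-assignment models listed in Table 1, although the paper does not spell out this setup.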

The training process involved the following steps:

- Image Preprocessing: All images were resized to 800 x 800 pixels for consistency, so that the model receives fixed-size inputs from which it can extract meaningful features across various image types.
- Model Optimization: The Adam optimizer [17] was used to minimize the loss function (sparse categorical cross-entropy), promoting efficient convergence [18]. During training, performance metrics such as accuracy, prediction speed, and training time were monitored to evaluate the model’s effectiveness. The training process was executed using Python 3.11.0 [19], Keras [20], and TensorFlow 2.13 [21].
- Evaluation Metrics: In addition to accuracy, precision, recall, and F1 score were used to assess the model’s effectiveness more comprehensively. These metrics help verify that the system’s predictions are reliable across different types of assignments and quality levels.
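To make the loss named above concrete: with sparse targets, each label is stored as an integer class index rather than a one-hot vector, and the loss for one image is -log of the probability the softmax assigns to its true class, averaged over the batch. The pure-Python sketch below computes exactly that; the clipping constant `eps` (which keeps a zero probability from producing an infinite loss) and the label order mentioned in the docstring are assumptions for illustration.

```python
import math


def sparse_categorical_crossentropy(labels, probs, eps=1e-7):
    """Mean over a batch of -log(p[true class]).

    labels: integer class indices, e.g. 0 = Excellence, 1 = Good, 2 = Bad (hypothetical order)
    probs:  per-image softmax outputs, one probability per class
    """
    if len(labels) != len(probs):
        raise ValueError("labels and probs must have the same length")
    total = 0.0
    for y, p in zip(labels, probs):
        total += -math.log(max(p[y], eps))  # clip so a zero probability stays finite
    return total / len(labels)


# Two images: the first is confidently right, the second less so.
loss = sparse_categorical_crossentropy([0, 2], [[0.8, 0.15, 0.05], [0.1, 0.2, 0.7]])
print(round(loss, 4))  # 0.2899
```

A perfectly confident correct prediction contributes zero loss, and the penalty grows without bound as the true-class probability approaches zero, which is why the loss pushes the softmax toward the correct quality level.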

3.3. Web Implementation and User Interface

The third component of the M-Stock system is a user-friendly web application that allows students and instructors to interact with the model in real time. The web application was developed using Streamlit 1.31.0 [22], a Python-based framework that simplifies the deployment of machine learning models in web environments. Users access the M-Stock system via the URL http://datascience.mfu.ac.th/mstock/. The application’s user interface is designed to be intuitive, enabling students to submit their photographs for assessment quickly and easily. Figure 5 illustrates the user interface of the homepage.

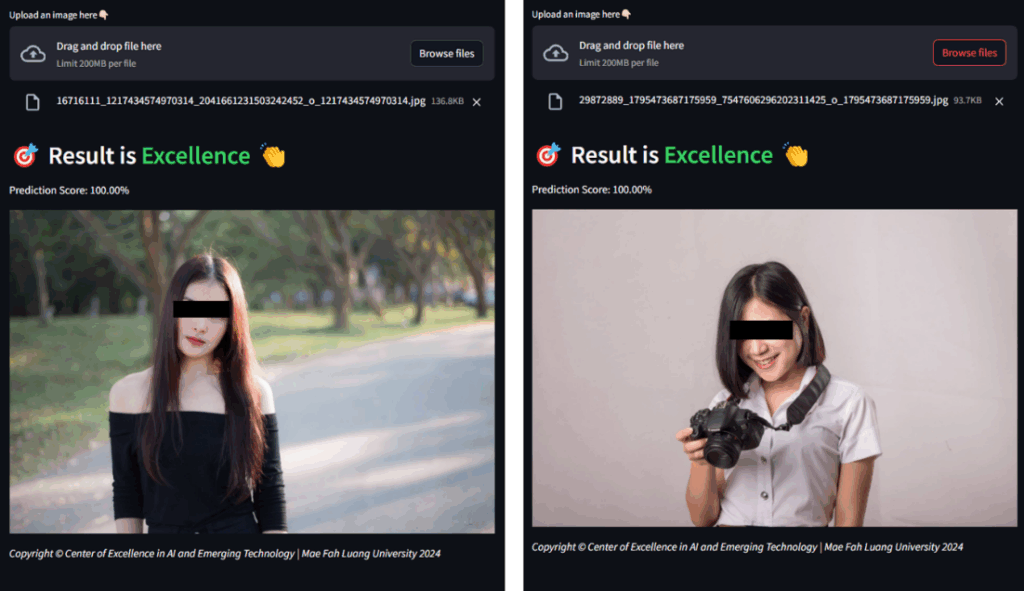

The submission process involves the following steps:
- Image Upload: Students select the assignment type and upload their photographs via the web interface. The system supports image formats such as JPG and PNG, with a maximum file size of 200 MB per image.
- Image Preprocessing and Classification: Once an image is uploaded, the system preprocesses it by resizing and standardizing the input. The pre-trained CNN model then classifies the image, providing a prediction and a confidence score for each category (e.g., Excellence, Good, Bad).
- Feedback Delivery: The classification results are displayed immediately, allowing students to receive prompt feedback on their work. This feedback can help students identify areas for improvement and refine their photography skills iteratively.

The M-Stock web application includes 11 pages: one homepage and ten assignment pages. Each assignment page corresponds to a specific photography lesson, where students can view sample photographs and submit their own work for evaluation. The web application’s architecture is designed to scale to larger datasets and more complex assignments as the photography curriculum evolves. The user interface of the Assignments page is depicted in Figure 6.
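The classification step ends with one probability per category from the model's softmax layer. A minimal sketch of turning that vector into the prediction and per-category confidence scores shown to students follows; the label order in `LABELS` and the percentage formatting are hypothetical, and a real deployment must use whatever label order the model was trained with.

```python
# Hypothetical label order; it must match the order used when the model was trained.
LABELS = ("Excellence", "Good", "Bad")


def to_feedback(probs, labels=LABELS):
    """Turn one softmax output vector into (predicted label, {label: confidence %})."""
    if len(probs) != len(labels):
        raise ValueError("one probability per label is required")
    confidences = {label: round(100 * p, 1) for label, p in zip(labels, probs)}
    best = max(range(len(probs)), key=lambda i: probs[i])  # index of the largest probability
    return labels[best], confidences


prediction, scores = to_feedback([0.12, 0.81, 0.07])
print(prediction, scores)
```

The same function covers the Shutterstock page by passing a two-element label tuple, for example `("Rejected", "Accepted")` in whatever order that model uses.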

Overall, the M-Stock system combines the power of CNN-based image classification with a tailored feedback mechanism to support student learning in photography. Through its combination of technical rigor, creative assessment, and real-time feedback, M-Stock offers a novel solution for enhancing photography education in university settings. This method ensures that students receive immediate, meaningful feedback on their work, fostering continuous improvement and skill development in both technical and artistic aspects of photography.

4. Evaluation And Results

The M-Stock system was evaluated based on its classification accuracy, prediction speed, and training time, along with additional metrics such as precision, recall, and F1 score to provide a comprehensive assessment. Furthermore, a pilot study was conducted with students to gather qualitative feedback on their learning experience with M-Stock. This section presents the experimental setup, results, and analysis, demonstrating M-Stock’s efficacy in supporting photography education.

4.1. Experimental Setup

The M-Stock system was tested in a virtualized server environment using VMware ESXi [23], running Windows Server 2016 [24] with an 8-core CPU (2.10 GHz) and 16 GB of RAM. The software stack comprised Python 3.11.0, Keras with TensorFlow 2.13.0, and Streamlit 1.31.0 for web deployment. The dataset for training and testing included 4,616 student images from various photography assignments and 244 Shutterstock images. The dataset was divided into an 80% training set and a 20% test set, so that the models were evaluated on images not seen during training. The system’s scalability and performance were also evaluated under different image resolutions and batch sizes. Additionally, a small-scale pilot study with 30 students was conducted to assess the impact of M-Stock’s feedback on learning outcomes.
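The paper specifies only the 80/20 proportion. The sketch below shows one straightforward way to produce such a split with Python's standard `random` module. The seed, the hypothetical file names, and the choice of a plain (non-stratified) shuffle over the pooled images are assumptions, since the paper does not say whether the split was stratified by quality label or performed separately for each assignment model.

```python
import random


def split_80_20(items, test_fraction=0.2, seed=42):
    """Shuffle a copy of `items` and hold out `test_fraction` of them for testing.

    Plain (non-stratified) random split; the seed is arbitrary and only makes
    the split reproducible.
    """
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]  # (train, test)


# 4,616 student images + 244 Shutterstock images = 4,860 images in total
images = [f"img_{i:04d}.jpg" for i in range(4616 + 244)]
train, test = split_80_20(images)
print(len(train), len(test))  # 3888 972
```

If some quality levels are rare within an assignment, splitting each label group separately (a stratified split) would keep the class proportions similar in both sets.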

4.2. Model Performance

The M-Stock system was evaluated for its ability to accurately classify student photography submissions across various assignment types, including technical and creative tasks. To assess the model’s effectiveness, we measured several key metrics: accuracy, precision, recall, and F1 score for each assignment type. These metrics provide a comprehensive view of the model’s classification performance, highlighting its strengths in technical precision and adaptability to different photography genres. The results are presented in Table 1.

Table 1: Performance of each photo model for the M-Stock System

| Photo Model | Accuracy (%) | Precision | Recall | F1-Score | Training Time (min) | Prediction Speed (ms) |
|---|---|---|---|---|---|---|
| Fast Shutter Speed | 96.72 | 0.96 | 0.95 | 0.96 | 28.7 | 47.1 |
| Long Shutter Speed | 96.93 | 0.97 | 0.96 | 0.97 | 39.1 | 46.7 |
| Night Light Photography | 98.36 | 0.98 | 0.97 | 0.98 | 45.5 | 50.6 |
| Composition and Subject | 98.77 | 0.99 | 0.98 | 0.99 | 70.4 | 43.7 |
| Aperture, Depth of Field | 95.33 | 0.95 | 0.94 | 0.95 | 14.7 | 47.9 |
| Light and Shadow | 95.47 | 0.94 | 0.95 | 0.94 | 35.4 | 45.3 |
| Portrait Photography | 99.53 | 0.99 | 0.99 | 0.99 | 119.2 | 43.4 |
| Moving Subject | 97.54 | 0.97 | 0.96 | 0.96 | 16.6 | 46.5 |
| Product Photography | 96.73 | 0.96 | 0.95 | 0.96 | 14.3 | 44.7 |
| Shutterstock Project | 96.39 | 0.95 | 0.94 | 0.94 | 20.3 | 45.2 |
| Total (Average) | 97.18 | 0.97 | 0.96 | 0.96 | 40.4 | 46.1 |
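The Total (Average) row is consistent with an unweighted mean of the ten per-model rows, in which each model counts equally regardless of how many test images it had. The short check below reproduces that row from the table's values; the unweighted-mean reading is our interpretation, as the paper does not state how the row was computed.

```python
from statistics import fmean

# Per-model rows from Table 1:
# (accuracy %, precision, recall, F1, training time min, prediction time ms)
TABLE_1 = {
    "Fast Shutter Speed": (96.72, 0.96, 0.95, 0.96, 28.7, 47.1),
    "Long Shutter Speed": (96.93, 0.97, 0.96, 0.97, 39.1, 46.7),
    "Night Light Photography": (98.36, 0.98, 0.97, 0.98, 45.5, 50.6),
    "Composition and Subject": (98.77, 0.99, 0.98, 0.99, 70.4, 43.7),
    "Aperture, Depth of Field": (95.33, 0.95, 0.94, 0.95, 14.7, 47.9),
    "Light and Shadow": (95.47, 0.94, 0.95, 0.94, 35.4, 45.3),
    "Portrait Photography": (99.53, 0.99, 0.99, 0.99, 119.2, 43.4),
    "Moving Subject": (97.54, 0.97, 0.96, 0.96, 16.6, 46.5),
    "Product Photography": (96.73, 0.96, 0.95, 0.96, 14.3, 44.7),
    "Shutterstock Project": (96.39, 0.95, 0.94, 0.94, 20.3, 45.2),
}

# Unweighted mean of each column: every model counts equally.
averages = [fmean(column) for column in zip(*TABLE_1.values())]
print([round(a, 2) for a in averages])  # [97.18, 0.97, 0.96, 0.96, 40.42, 46.11]
```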

Accuracy: The system achieved an overall accuracy of 97.18%, with individual assignment accuracies ranging from 95.33% for Aperture, Depth of Field to 99.53% for Portrait Photography. This consistently high accuracy across diverse photography tasks, from technical skills (e.g., Long Shutter Speed) to more composition-focused assignments (e.g., Composition and Subject), indicates that M-Stock generalizes well across different photographic techniques and styles, making it adaptable to a comprehensive photography curriculum.

In addition to accuracy, we calculated precision, recall, and F1 scores for each assignment type to gain insight into M-Stock’s classification reliability:
- Precision: High precision values (average of 0.97) indicate that M-Stock has a low rate of false positives: when it assigns an image to a category, that assignment is usually correct, so lower-quality images are rarely labelled as higher quality. This is crucial in an educational context, where students need accurate feedback to understand which areas require improvement.
- Recall: The average recall of 0.96 shows that M-Stock identifies most of the images that meet each quality criterion. High recall is especially important for technical assignments, as it means the system rarely misses images with correct exposure, composition, and other technical parameters.
- F1 Score: With an average F1 score of 0.96, M-Stock demonstrates balanced performance. Because the F1 score is the harmonic mean of precision and recall, this value confirms that the system keeps both false positives and false negatives low, providing reliable feedback.

The average prediction time of 46.1 milliseconds per image shows that M-Stock provides rapid feedback, which is essential in real-time educational environments where students submit images and expect prompt responses. This quick feedback loop enables students to identify mistakes immediately and make improvements, reinforcing the learning process. Training time varies by assignment type: Portrait Photography took the longest (119.2 minutes), presumably because of the more intricate analysis it requires.
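Table 1 reports a single precision, recall, and F1 value per model even though most models distinguish several quality levels, so per-class scores were presumably aggregated across classes. The paper does not say which aggregation was used; the sketch below assumes macro averaging (every class weighted equally) and computes the three scores from a confusion matrix. The confusion-matrix counts are hypothetical.

```python
from statistics import fmean


def macro_scores(confusion):
    """Macro-averaged (precision, recall, F1) from a square confusion matrix.

    confusion[i][j] counts images whose true class is i and predicted class is j.
    Scores are computed per class, then averaged with equal weight per class.
    """
    n = len(confusion)
    precisions, recalls, f1s = [], [], []
    for c in range(n):
        tp = confusion[c][c]
        predicted_as_c = sum(confusion[row][c] for row in range(n))  # column sum
        actually_c = sum(confusion[c])  # row sum
        p = tp / predicted_as_c if predicted_as_c else 0.0
        r = tp / actually_c if actually_c else 0.0
        f1 = 2 * p * r / (p + r) if p + r else 0.0  # harmonic mean of p and r
        precisions.append(p)
        recalls.append(r)
        f1s.append(f1)
    return fmean(precisions), fmean(recalls), fmean(f1s)


# Hypothetical counts for one assignment model (rows: true class, columns: predicted)
cm = [
    [8, 2, 0],  # true Excellence
    [1, 7, 2],  # true Good
    [0, 1, 9],  # true Bad
]
precision, recall, f1 = macro_scores(cm)
print(f"{precision:.3f} {recall:.3f} {f1:.3f}")  # 0.802 0.800 0.800
```

Averaged this way, F1 is the mean of the per-class F1 values, which need not equal the harmonic mean of the averaged precision and recall.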

M-Stock’s classification performance metrics demonstrate its effectiveness in providing real-time feedback across a wide range of photographic techniques. By maintaining high accuracy, precision, and recall across both technical and creative assignments, the system supports educators in delivering consistent, objective feedback to students. This capability is particularly beneficial in large classes, where individualized feedback is challenging to provide manually. With M-Stock, students can receive accurate, actionable feedback that promotes self-directed learning and skill refinement. Overall, M-Stock’s classification performance confirms its suitability as a comprehensive educational tool, capable of assessing diverse photography tasks with high accuracy and efficiency. Future enhancements may involve refining these classification models further to increase precision and recall in more subjective creative categories, aligning with the evolving needs of photography education.

4.3. Scalability and Runtime Performance

The scalability of the M-Stock system was tested across various image resolutions and batch sizes to evaluate its capacity for handling large volumes of submissions in real-time classroom settings. Scalability is essential in educational applications where a high number of images may be submitted simultaneously, especially in large classes. The system was assessed under four different image resolutions—640×480 (low), 1280×720 (HD), 1920×1080 (Full HD), and 3840×2160 (4K)—to analyse the effect of image size on prediction speed and accuracy. For each image resolution, we measured the average prediction speed, batch processing time, and accuracy to determine the system’s efficiency and robustness under increasing data sizes. Table 2 below illustrates these findings:

Table 2: Different Image Sizes Experiment Results

| Image Size (pixels) | Prediction Speed (ms) | Batch Processing Time, 50 images (s) | Accuracy (%) |
|---|---|---|---|
| 640 × 480 | 39.5 | 2.1 | 96.3 |
| 1280 × 720 | 46.1 | 2.5 | 97.2 |
| 1920 × 1080 | 52.4 | 3.2 | 97.8 |
| 3840 × 2160 | 74.6 | 5.4 | 98.1 |

These results show that the system maintains high accuracy across all resolutions, while prediction time rises from 39.5 ms at 640 × 480 to 74.6 ms at 4K. For low and HD resolutions, prediction times are under 50 milliseconds, allowing near-instantaneous feedback in real-time applications. Full HD and 4K images take longer to process, but their prediction times remain well within acceptable limits for classroom use, ensuring efficient operation even for high-quality images. Accuracy does not degrade with larger image sizes; it rises slightly, from 96.3% to 98.1%. The system’s batch processing ability was evaluated to simulate high-demand situations where multiple students submit images simultaneously. We processed batches of 50 images at different resolutions and recorded the total processing time required. Batch processing time grew with resolution, from approximately 2.1 seconds for a 50-image batch at low resolution to 5.4 seconds at 4K. This capability indicates that M-Stock is well suited to the real-time feedback needs of large classes, where simultaneous submissions are common.
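Converting the Table 2 batch times to per-image figures is simple arithmetic: dividing each 50-image batch time by the batch size gives an amortized cost per image, and dividing the batch size by the time gives throughput. The snippet below does this for the reported values; it adds no new measurements.

```python
BATCH_SIZE = 50  # images per batch in the scalability experiment

# Batch processing times (seconds) reported in Table 2
BATCH_SECONDS = {
    "640 x 480": 2.1,
    "1280 x 720": 2.5,
    "1920 x 1080": 3.2,
    "3840 x 2160": 5.4,
}


def amortized(batch_seconds: float, batch_size: int = BATCH_SIZE) -> tuple[float, float]:
    """Return (milliseconds per image, images per second) for one batch."""
    return 1000 * batch_seconds / batch_size, batch_size / batch_seconds


for size, seconds in BATCH_SECONDS.items():
    ms_per_image, images_per_s = amortized(seconds)
    print(f"{size}: {ms_per_image:.0f} ms/image, {images_per_s:.1f} images/s")
```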

4.4. User Satisfaction

To assess user satisfaction with the M-Stock system, a survey was conducted among 30 students and 5 instructors during the pilot study. The survey evaluated four key dimensions: ease of use, feedback clarity, perceived usefulness, and overall experience. Participants rated each dimension on a Likert scale from 1 (strongly disagree) to 5 (strongly agree). The results are summarized in Table 3.

Table 3: User Satisfaction Survey Results

| Dimension | Average | SD | Agreement (Score ≥ 4) |
|---|---|---|---|
| Ease of Use | 4.7 | 0.3 | 93% |
| Feedback Clarity | 4.5 | 0.4 | 87% |
| Perceived Usefulness | 4.6 | 0.5 | 90% |
| Overall Experience | 4.6 | 0.3 | 92% |
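Each row of Table 3 can be computed from raw 1 to 5 responses with Python's standard `statistics` module. The sketch below assumes that SD is the sample standard deviation and that agreement is the share of scores of 4 or 5, matching the "Score ≥ 4" header; the responses in the example are hypothetical, not the study's data.

```python
from statistics import mean, stdev


def summarize_likert(scores, agree_threshold=4):
    """Return (average, sample SD, % of scores >= agree_threshold) for 1-5 ratings."""
    if len(scores) < 2:
        raise ValueError("need at least two responses for a sample SD")
    agreement = 100 * sum(s >= agree_threshold for s in scores) / len(scores)
    return round(mean(scores), 2), round(stdev(scores), 2), round(agreement)


# Hypothetical ratings for one dimension (illustrative, not the study's data)
print(summarize_likert([5, 5, 4, 5, 4, 3]))  # (4.33, 0.82, 83)
```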

The survey results indicate high levels of satisfaction across all dimensions. Participants found the system’s interface intuitive and straightforward, with an average score of 4.7 for ease of use. Feedback clarity received an average score of 4.5, reflecting the comprehensibility of the AI-generated evaluations. Perceived usefulness, that is, the system’s ability to enhance photography skills, was rated 4.6 on average, indicating that participants saw it as effective in promoting self-directed learning. Overall, users rated their experience with the system highly, with an average score of 4.6 and 92% agreement. Qualitative responses also highlighted specific benefits, such as the speed of feedback delivery and the ability to focus on iterative improvement. Suggestions for enhancement included adding more nuanced assessments of creative aspects, such as artistic style and emotional impact. These results suggest that M-Stock supports both teaching and learning objectives, providing a user-friendly, impactful solution for photography education.

Students also reported appreciating the quick turnaround of feedback, which allowed them to make adjustments in near real time. These findings suggest that M-Stock’s formative feedback supports continuous learning, enhancing students’ technical and creative skills. The results show that the M-Stock system performed exceptionally well in both educational and commercial contexts. The high accuracy rates across all categories demonstrate that the CNN model can handle diverse photographic styles and quality levels. The short average prediction time of 46.1 milliseconds per image allows the system to provide immediate feedback, which is crucial for enhancing the learning experience in photography courses. The results of the photo quality assessments for each assignment are displayed in Figure 7.

The Portrait Photography model, which achieved the highest accuracy (99.53%), required the longest training time (119.2 minutes). This suggests that more complex assignments, which involve intricate features such as lighting and composition in portrait photography, require more computational resources to train effectively. Once trained, however, the model classifies images quickly and accurately. In contrast, simpler assignments, such as Product Photography (14.3 minutes) and Moving Subject (16.6 minutes), required significantly less training time but still achieved high accuracy, indicating that the model can generalize well across different photography styles. The Shutterstock project data also yielded strong results, with an accuracy of 96.39%. This suggests that the system can meet industry standards for evaluating commercial photography, providing feedback that aligns with professional evaluation criteria. The results of the photo quality assessments for the Shutterstock project are presented in Figure 8.

The evaluation results indicate that M-Stock performs reliably across technical metrics, while the pilot study suggests a positive impact on student learning. The high accuracy, coupled with quick feedback delivery, underscores M-Stock’s suitability for real-time educational applications. The scalability tests further indicate that the system is robust enough to handle diverse classroom environments with high submission volumes. Overall, M-Stock provides a comprehensive assessment experience for photography students, offering both technical precision and creative guidance. Future work may extend the system’s feedback capabilities to include more nuanced assessments of creative elements, potentially incorporating reinforcement learning techniques to adapt feedback based on individual student progress.

5. Conclusion

The M-Stock system represents a significant advancement in photography education, leveraging artificial intelligence to provide automated, real-time feedback on both technical and creative aspects of student submissions. Using Convolutional Neural Networks (CNNs), the system achieved high accuracy (97.18%) and fast predictions (46.1 milliseconds per image on average), making it a reliable and scalable solution for dynamic classroom environments. Through a combination of quantitative evaluations and qualitative user feedback, the study demonstrated that M-Stock effectively enhances student learning experiences. Students reported improvements in their technical skills, self-directed learning, and overall engagement, while instructors appreciated the system’s ability to maintain consistent evaluation standards across large class sizes. The system’s ease of use and comprehensive feedback mechanisms make it a valuable tool for fostering continuous learning and skill development in photography courses.

Despite these achievements, challenges remain in assessing highly subjective creative elements, such as artistic style and emotional impact. Future iterations of M-Stock should incorporate advanced techniques, such as reinforcement learning or generative models, to provide deeper insights into these aspects. Additionally, expanding the platform to support other creative disciplines, such as graphic design and visual arts, could broaden its applicability and impact. In conclusion, M-Stock exemplifies how AI can transform education by addressing key limitations of traditional assessment methods. By combining technical rigor with creative evaluation, the system not only meets the evolving needs of photography education but also sets the stage for broader applications of AI in creative and technical learning environments.

Conflict of Interest

The authors declare no conflict of interest.

Acknowledgment

The web server used in this study for M-Stock, the AI-based photography assessment system using convolutional neural networks, was supported by the Center of Excellence in Artificial Intelligence and Emerging Technologies, School of Applied Digital Technology, Mae Fah Luang University.

- M. Le´on, R. Bello, K. Vanhoof, “Cognitive Maps in Transport Behavior,” in 2009 Eighth Mexican International Conference on Artificial Intelligence, 179–184, IEEE, 2009, doi:10.1109/MICAI.2009.31.

- M. Leon, L. Mkrtchyan, B. Depaire, D. Ruan, K. Vanhoof, “Learning and clustering of fuzzy cognitive maps for travel behaviour analysis,” Knowledge and Information Systems, 39(2), 435–462, 2013, doi:10.1007/s10115-013-0616-z.

- M. León, “Fuzzy Cognitive Maps as a Bridge Between Symbolic and Sub-Symbolic Artificial Intelligence,” International Journal on Cybernetics & Informatics, 13(4), 57–75, 2024, doi:10.5121/ijci.2024.13405.

- M. Leon, “Aggregating Procedure for Fuzzy Cognitive Maps,” The International FLAIRS Conference Proceedings, 36(1), 2023, doi:10.32473/flairs.36.133082.

- A. Ghimire, J. Prather, J. Edwards, “Generative AI in Education: A Study of Educators’ Awareness, Sentiments, and Influencing Factors,” 2024, doi:10.48550/ARXIV.2403.15586.

- M. León, N. M. Sánchez, Z. Z. García, R. B. Pérez, “Concept Maps Combined with Case-Based Reasoning in Order to Elaborate Intelligent Teaching/Learning Systems,” in Seventh International Conference on Intelligent Systems Design and Applications (ISDA 2007), 205–210, IEEE, 2007, doi:10.1109/ISDA.2007.11.

- M. León, G. Nápoles, R. Bello, L. Mkrtchyan, B. Depaire, K. Vanhoof, “Tackling Travel Behaviour: An Approach Based on Fuzzy Cognitive Maps,” International Journal of Computational Intelligence Systems, 6(6), 1012–1039, 2013, doi:10.1080/18756891.2013.816025.

- M. León, “Comparing LLMs Using a Unified Performance Ranking System,” 2024, doi:10.5121/ijcsit.2023.15103.

- M. León, H. DeSimone, “Advancements in Explainable Artificial Intelligence for Enhanced Transparency and Interpretability across Business Applications,” Advances in Science, Technology and Engineering Systems Journal, 9(5), 9–20, 2024, doi:10.25046/aj090502.

- M. León, “Toward the Application of the Problem-Based Learning Paradigm into the Instruction of Business Technology and Innovation,” International Journal of Learning and Teaching, 10(5), 571–575, 2024, doi:10.18178/ijlt.10.5.571-575.

- H. DeSimone, M. León, “Leveraging Explainable AI in Business and Further,” in 3rd IEEE Opportunity Research Scholars Symposium, 2024, doi:10.1109/ORSS.2024.1234567.

- M. León, “Harnessing Fuzzy Cognitive Maps for Advancing AI with Hybrid Interpretability and Learning Solutions,” Advanced Computing: An International Journal, 15(5), 1–23, 2024, doi:10.5121/acij.2024.150501.

- M. León, “Generative AI as a New Paradigm for Personalized Tutoring in Modern Education,” International Journal on Integrating Technology in Education, 13(3), 49–63, 2024, doi:10.5121/ijite.2024.13304.

- M. León, “Benchmarking Large Language Models with a Unified Performance Ranking Metric,” International Journal on Foundations of Computer Science & Technology, 14(4), 15–27, 2024, doi:10.5121/ijfcst.2024.14402.

- M. León, “The Needed Bridge Connecting Symbolic and Sub-Symbolic AI,” International Journal of Computer Science, Engineering and Information Technology, 14(1), 1–19, 2024, doi:10.5121/ijcseit.2024.14101.

- M. León, “Leveraging Generative AI for On-Demand Tutoring as a New Paradigm in Education,” International Journal on Cybernetics & Informatics, 13(5), 17–29, 2024, doi:10.5121/ijci.2024.13502.

- M. León, G. Nápoles, C. Rodríguez, M. M. García, R. Bello, K. Vanhoof, “A Fuzzy Cognitive Maps Modeling, Learning and Simulation Framework for Studying Complex System,” in New Challenges on Bioinspired Applications: 4th International Work-conference on the Interplay Between Natural and Artificial Computation (IWINAC 2011), 243–256, Springer Berlin Heidelberg, 2011, doi:10.1007/978-3-642-21326-7_27.

- G. Nápoles, M. L. Espinosa, I. Grau, K. Vanhoof, R. Bello, “Fuzzy Cognitive Maps Based Models for Pattern Classification: Advances and Challenges,” volume 360, 83–98, Springer Verlag, 2018.

- M. León, L. Mkrtchyan, B. Depaire, D. Ruan, R. Bello, K. Vanhoof, “Learning Method Inspired on Swarm Intelligence for Fuzzy Cognitive Maps: Travel Behaviour Modelling,” in Artificial Neural Networks and Machine Learning – ICANN 2012: 22nd International Conference on Artificial Neural Networks, Lausanne, Switzerland, September 11–14, 2012, Proceedings, Part I, 718–725, Springer Berlin Heidelberg, 2012, doi:10.1007/978-3-642-33269-2_90.

- G. Nápoles, Y. Salgueiro, I. Grau, M. Leon, “Recurrence-Aware Long-Term Cognitive Network for Explainable Pattern Classification,” IEEE Transactions on Cybernetics, 53(10), 6083–6094, 2023, doi:10.1109/TCYB.2022.3142284.

- G. Nápoles, M. Leon, I. Grau, K. Vanhoof, “FCM Expert: Software Tool for Scenario Analysis and Pattern Classification Based on Fuzzy Cognitive Maps,” International Journal on Artificial Intelligence Tools, 27(07), 1860010, 2018, doi:10.1142/S0218213018600102.

- M. León, B. Depaire, K. Vanhoof, “Fuzzy Cognitive Maps with Rough Concepts,” in Artificial Intelligence Applications and Innovations: 9th IFIP WG 12.5 International Conference, AIAI 2013, Paphos, Cyprus, September 30–October 2, 2013, Proceedings, 527–536, Springer Berlin Heidelberg, 2013, doi:10.1007/978-3-642-41142-7_53.

- F. Hoitsma, A. Knoben, M. León, G. Nápoles, “Symbolic Explanation Module for Fuzzy Cognitive Map-Based Reasoning Models,” in Artificial Intelligence XXXVII: 40th SGAI International Conference on Artificial Intelligence, AI 2020, Cambridge, UK, December 15–17, 2020, Proceedings, 21–34, Springer International Publishing, 2020, doi:10.1007/978-3-030-63799-6_3.

- M. León, G. Nápoles, M. M. García, R. Bello, K. Vanhoof, “A Revision and Experience Using Cognitive Mapping and Knowledge Engineering in Travel Behavior Sciences,” Polibits, (42), 43–50, 2010, doi:10.17562/PB-42-6.

- H. DeSimone, M. Leon, “Explainable AI: The Quest for Transparency in Business and Beyond,” in 2024 7th International Conference on Information and Computer Technologies (ICICT), IEEE, 2024, doi:10.1109/icict62343.2024.00093.

- M. Alier, F.-J. García-Peñalvo, J. D. Camba, “Generative Artificial Intelligence in Education: From Deceptive to Disruptive,” International Journal of Interactive Multimedia and Artificial Intelligence, 8(5), 5, 2024, doi:10.9781/ijimai.2024.02.011.

- J. Su, W. Yang, “Unlocking the Power of ChatGPT: A Framework for Applying Generative AI in Education,” ECNU Review of Education, 6(3), 355–366, 2023, doi:10.1177/20965311231168423.

- M. Leon, “Business Technology and Innovation Through Problem-Based Learning,” in Canada International Conference on Education (CICE-2023) and World Congress on Education (WCE-2023), CICE-2023, Infonomics Society, 2023, doi:10.20533/cice.2023.0034.

- H. Wang, A. Tlili, R. Huang, Z. Cai, M. Li, Z. Cheng, D. Yang, M. Li, X. Zhu, C. Fei, “Examining the applications of intelligent tutoring systems in real educational contexts: A systematic literature review from the social experiment perspective,” Education and Information Technologies, 28(7), 9113–9148, 2023, doi:10.1007/s10639-022-11555-x.

- E. A. Alasadi, C. R. Baiz, “Generative AI in Education and Research: Opportunities, Concerns, and Solutions,” Journal of Chemical Education, 100(8), 2965–2971, 2023, doi:10.1021/acs.jchemed.3c00323.

- D. Baidoo-Anu, L. Owusu Ansah, “Education in the Era of Generative Artificial Intelligence (AI): Understanding the Potential Benefits of ChatGPT in Promoting Teaching and Learning,” Journal of AI, 7(1), 52–62, 2023, doi:10.61969/jai.1337500.

- E. Struble, M. Leon, E. Skordilis, “Intelligent Prevention of DDoS Attacks using Reinforcement Learning and Smart Contracts,” The International FLAIRS Conference Proceedings, 37(1), 2024, doi:10.32473/flairs.37.1.135349.

- X. Zhai, X. Chu, C. S. Chai, M. S. Y. Jong, A. Istenic, M. Spector, J.-B. Liu, J. Yuan, Y. Li, “A Review of Artificial Intelligence (AI) in Education from 2010 to 2020,” Complexity, 2021, 1–18, 2021, doi:10.1155/2021/8812542.

- C.-C. Lin, A. Y. Q. Huang, O. H. T. Lu, “Artificial intelligence in intelligent tutoring systems toward sustainable education: a systematic review,” Smart Learning Environments, 10(1), 2023, doi:10.1186/s40561-023-00260-y.

- W. Holmes, K. Porayska-Pomsta, K. Holstein, E. Sutherland, T. Baker, S. B. Shum, O. C. Santos, M. T. Rodrigo, M. Cukurova, I. I. Bittencourt, K. R. Koedinger, “Ethics of AI in Education: Towards a Community-Wide Framework,” International Journal of Artificial Intelligence in Education, 32(3), 504–526, 2021, doi:10.1007/s40593-021-00239-1.

- K. Zhang, A. B. Aslan, “AI technologies for education: Recent research & future directions,” Computers and Education: Artificial Intelligence, 2, 100025, 2021, doi:10.1016/j.caeai.2021.100025.

- L. Chen, P. Chen, Z. Lin, “Artificial Intelligence in Education: A Review,” IEEE Access, 8, 75264–75278, 2020, doi:10.1109/ACCESS.2020.2988510.

- G. Nápoles, I. Grau, R. Bello, M. León, K. Vanhoof, E. Papageorgiou, “A Computational Tool for Simulation and Learning of Fuzzy Cognitive Maps,” in 2015 IEEE International Conference on Fuzzy Systems (FUZZ-IEEE), 1–8, IEEE, 2015, doi:10.1109/FUZZ-IEEE.2015.7338005.

- G. Nápoles, J. L. Salmeron, W. Froelich, R. Falcon, M. Leon, F. Vanhoenshoven, R. Bello, K. Vanhoof, “Fuzzy Cognitive Modeling: Theoretical and Practical Considerations,” 77–87, Springer Singapore, 2019, doi:10.1007/978-981-13-8311-3_7.

- M. León, “The Escalating AI’s Energy Demands and the Imperative Need for Sustainable Solutions,” WSEAS Transactions on Systems, 23, 444–457, 2024, doi:10.37394/23202.2024.23.46.

- G. Nápoles, F. Hoitsma, A. Knoben, A. Jastrzebska, M. Leon, “Prolog-based agnostic explanation module for structured pattern classification,” Information Sciences, 622, 1196–1227, 2023, doi:10.1016/j.ins.2022.12.012.
