Indonesian Music Emotion Recognition Based on Audio with Deep Learning Approach

Volume 6, Issue 2, Page No 716–721, 2021

Adv. Sci. Technol. Eng. Syst. J. 6(2), 716–721 (2021)

DOI: 10.25046/aj060283

Keywords: Music Emotion Recognition, Indonesian Music, Deep Learning, Convolutional Neural Network, Recurrent Neural Network

Music Emotion Recognition (MER) is the task of recognizing the emotion conveyed by a piece of music. MER remains challenging because the emotion perceived in music depends on several features, audio being one of them. This paper applies deep learning to MER, specifically a Convolutional Neural Network (CNN) and a Convolutional Recurrent Neural Network (CRNN), using a dataset of 361 Indonesian songs. The music is classified into three main emotion groups: positive, neutral, and negative. In our experiments, the best model for MER on Indonesian music is the CRNN, with a test accuracy of 58.33%, outperforming the CNN.

1. Introduction

Music is a language for expressing emotion. Knowing the emotion conveyed by a piece of music lets listeners choose music that suits their emotional state. Recognizing emotion in music can also support future smart systems. One example is a smart car that helps stabilize the driver’s emotions while driving, since a driver’s emotional state affects how they drive. Positive or negative emotion affects the risk level, the reaction to a particular situation, the driver’s actions, and the level of driving awareness [1].

According to the study in [2], human emotion can be described by 28 emotion variations placed according to their valence and arousal levels. This research focuses on classifying music into three main emotion groups: positive, neutral, and negative. Music Emotion Recognition (MER) has become a challenging problem in the music world because the emotion of a particular song is conveyed by its tone, tempo, and lyrics. Deep learning is used here to address MER. A Convolutional Neural Network (CNN) has been reported to analyze audio for MER better than conventional machine learning [3]. A Convolutional Recurrent Neural Network (CRNN) has likewise been reported to perform well at analyzing audio to classify music into two main emotion groups, positive and negative [4].

A study in [5] found that, with RNN encoding, an algorithm can predict the emotion in music well; even where it cannot explicitly predict the emotion, it is useful for selecting music with strong emotion and for giving users recommendations. Another experiment, conducted in [6], shows that CNN has an advantage in extracting useful features from raw data, which helps emotion recognition. The study in [3] also reports that CNN is an effective method for predicting the emotion of songs from spectrograms. However, the precision of these models can still be improved. Based on these findings, we decided to conduct research in Music Emotion Recognition using CNN and CRNN. Through this research we also identified the best parameters, among those we tried, for the CNN and CRNN models that we propose. We experiment specifically on Indonesian music because the study in [7] found that using a dataset from a specific country gives better performance. It can also help show the genre trends in that country.

2. Literature Review

This section covers the background for our research: the emotion model, Music Emotion Recognition (MER), and related work.

2.1. Valence-Arousal Emotion Model

The Valence-Arousal model (V-A model) proposed in [2] is the emotion model most widely used by researchers. The variety of emotions introduced in [2] is shown in Figure 1.

Figure 1: 2D Valence-Arousal Emotion Space [2]

Figure 1 shows a two-dimensional space with valence on the horizontal axis and arousal on the vertical axis. Valence is the affective quality referring to the intrinsic attractiveness/goodness (positive valence) or aversiveness/badness (negative valence) of a stimulus. Arousal is the degree of emotional activation, that is, how energized or motivated the emotion makes us feel.

2.2. Music Emotion Recognition (MER)

In this digital era, music has become an important part of human life. As digital music has grown, MER has attracted interest over the past few years because music is closely related to a person’s mood and emotion.

Several multimedia systems recognize or obtain emotional information from music, such as Moodtrack, MusicSense, Mood Cloud, Moody, and I.MTV [8]. In [8], the authors claim that a computer that can recognize emotion in music can improve the interaction between computers and humans. For this reason, MER needs to be developed so that computers can automatically recognize or classify music by the emotion it conveys. Developing MER is challenging because emotion can be conceptualized and associated with music in various ways, so the choice of emotion categories in MER is still debated. Table 1 compares existing approaches to MER.

Table 1: Comparison of Existing Work on MER [7]

| Approach | Emotion conceptualization | Description |
|---|---|---|
| Categorical MER | Categorical | Predict the discrete emotion labels |
| Dimensional MER | Dimensional | Predict the numerical emotion values |
| Music Emotion Variation Detection (MEVD) | Dimensional | Predict the continuous emotion variation |

The categorical MER approach divides emotion into several classes and applies machine learning to train a classifier. The dimensional MER approach defines emotions as numerical values along particular dimensions, such as valence and arousal [2]. MEVD aims to predict the emotion of every short-time segment of a song, which helps to capture more complex emotions.

2.3. Related Works

This paper refers to several studies in MER using machine learning and deep learning with audio features. One study used the Million Song Dataset (MSD) [9], which consists of timbre segments along with audio features [10]. That study evaluated its classifiers using 5-fold cross-validation. Support Vector Machine (SVM), Random Forest (RF), k-Nearest Neighbor (k-NN), Multilayer Perceptron (MLP), Logistic Regression (LR), and Naïve Bayes (NB) were used in [10], and LR was reported to achieve the highest accuracy, 57.93%. The authors of [3] claimed that a Convolutional Neural Network (CNN) achieves better accuracy than machine learning. Their dataset contains 30,498 spectrograms from 744 songs. Every song supplied 45-second clips, and every clip was transformed into a spectrogram. The study reports that CNN is a better model than machine learning, with 72% accuracy [3]. Another study [4] used CNN, RNN, and CRNN to solve the MER problem. A total of 48,476 songs from MSD [9] were used as the dataset, and every song was transformed into a Mel-spectrogram. That research classified the data into two classes: positive and negative. It shows that CRNN, with 66% accuracy, is better than CNN and RNN, which achieved 64% and 63%, respectively [4].

The study in [5] uses a fusion of antonyms to describe emotions in the context of MER and mentions that tempo and energy were useful features. With RNN encoding, the algorithm can predict the emotion in music well; even where it cannot explicitly predict the emotion, it is useful for selecting music with strong emotion and for giving users recommendations. The study in [6] proposes a novel method that combines the original music spectrogram with CNN to predict the emotion tag. The authors reported that CNN has an advantage in extracting useful features from raw data, which helps emotion recognition, but that more research is needed on the meaning of CNN outputs. The study in [3] proposes a method that uses CNN to classify features extracted from music spectrograms and states that CNN is an effective method for predicting the emotion of songs from spectrograms. However, the precision of these models can still be improved. Building on these studies, the research presented in this paper focuses on using CNN and CRNN to classify our dataset into three main groups.

3. Proposed Method

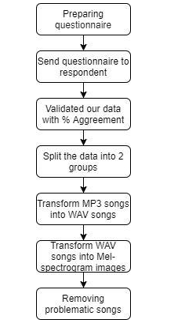

This section describes how the data was collected and pre-processed and presents our proposed method. Figure 2 illustrates the research methodology.

Figure 2: Flow Chart of Research Methodology

3.1. Data Collection & Pre-processing Data

We collected 614 non-vocal audio tracks from YouTube. The data is Indonesian music covering various genres, the majority of it pop. The decision to use music from a single country is based on the hypothesis that every country has its own style of music; MER research using specifically Korean music as its dataset achieved good accuracy [7]. After collecting the audio, we distributed a questionnaire to respondents to help label the data into three emotion groups: positive, neutral, and negative. Positive music conveys positive energy, such as excitement, happiness, and pleasure. Neutral music conveys neutral energy, such as feeling relaxed, calm, or bored. Negative music conveys negative energy, such as sadness, frustration, and anger. For the labelling process, we assigned five people to label each data sample to make the assessment as objective as possible. After collecting all the responses, we validated the data using the agreement percentage and kept only samples with an agreement score above 50%. This left 536 non-vocal audio tracks: 180 positive, 226 neutral, and 130 negative.
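The agreement filter described above can be sketched in a few lines of Python. This is a minimal illustration, assuming that the agreement score of a song is the share of its five annotators who chose the most common label; the function names and the sample responses are hypothetical and are not taken from the study's data.

```python
from collections import Counter


def majority_label(votes):
    """Return (label, agreement), where agreement is the share of votes
    received by the most common label."""
    counts = Counter(votes)
    label, top = counts.most_common(1)[0]
    return label, top / len(votes)


def filter_by_agreement(annotations, threshold=0.5):
    """Keep only songs whose top label is chosen by more than `threshold`
    of the annotators (assumed reading of "above 50% agreement")."""
    kept = {}
    for song_id, votes in annotations.items():
        label, agreement = majority_label(votes)
        if agreement > threshold:
            kept[song_id] = label
    return kept


# Hypothetical responses from five annotators per song.
annotations = {
    "song_a": ["positive", "positive", "positive", "neutral", "negative"],
    "song_b": ["neutral", "neutral", "positive", "positive", "negative"],
}
print(filter_by_agreement(annotations))
```

With five annotators, a threshold above 50% means that at least three of them must agree, so `song_b` in this example would be discarded.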

After validating the questionnaire data, we prepared two versions of the dataset: full songs and 45-second clips. A full song is one complete track, whereas for the 45-second-clip version each complete song is divided into 45-second clips. The data is then converted into spectrograms. A spectrogram is a visual representation of the frequency spectrum of a signal as it varies over time. From a spectrogram, the machine can learn a variety of emotions from the song’s spectrum. There are several types of spectrogram; we selected the Mel-spectrogram because it is one of the most widespread features in audio analysis tasks such as music auto-tagging and latent feature learning. The Mel scale is supported by domain knowledge of the human auditory system [9] and has been empirically validated by strong performance gains in various tasks [10]. Our program accepts only .wav input for the Mel-spectrogram converter, so we first converted the songs from .mp3 to .wav. The following parameters were used to build the Mel-spectrograms: hop_length = 4096 (number of samples per time-step in the spectrogram), n_mels = 128 (number of bins in the spectrogram, the height of the image), and time_steps = 256 (number of time-steps, the width of the image). Each Mel-spectrogram is converted into a 128×256 grayscale image, which is then used as the input to the CNN and CRNN models.
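To give a feel for what the 128 Mel bins represent, the sketch below spaces 128 band centres evenly on the Mel scale. It is an illustration only: it uses the common HTK-style conversion formula, whereas librosa's default Mel scale differs slightly, and the upper frequency of 11025 Hz assumes a 22050 Hz sampling rate, which the paper does not state.

```python
import math


def hz_to_mel(freq_hz):
    """HTK-style conversion from Hz to mel (an assumed formula choice)."""
    return 2595.0 * math.log10(1.0 + freq_hz / 700.0)


def mel_to_hz(mel):
    """Inverse of hz_to_mel."""
    return 700.0 * (10.0 ** (mel / 2595.0) - 1.0)


def mel_band_centers(n_mels, f_min, f_max):
    """Centre frequencies (Hz) of n_mels bands spaced evenly in mel."""
    lo, hi = hz_to_mel(f_min), hz_to_mel(f_max)
    step = (hi - lo) / (n_mels + 1)
    return [mel_to_hz(lo + step * (i + 1)) for i in range(n_mels)]


# n_mels = 128 comes from the text; f_max assumes a 22050 Hz sampling rate.
centers = mel_band_centers(n_mels=128, f_min=0.0, f_max=11025.0)
print(round(centers[0], 1), round(centers[-1], 1))
print(round(centers[1] - centers[0], 1), round(centers[-1] - centers[-2], 1))
```

The second line of output shows that neighbouring bands are narrow at low frequencies and wide at high frequencies, which is the property that makes the Mel scale resemble human pitch perception.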

The final dataset used in this experiment consists of spectrograms from 361 songs: 86 negative, 156 neutral, and 119 positive. It was obtained after removing problematic songs, namely those with ambiguous labels or those whose spectrograms had missing data. The full songs were used in the first experiment and the 45-second clips in the second, for which the spectrogram dataset grew to 3095 clips (767 negative, 1308 neutral, and 1020 positive).
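Dividing a song into 45-second clips can be sketched as follows. The paper does not say how a trailing segment shorter than 45 seconds was handled, so the `keep_partial` flag is an assumption, and the 200-second duration is a made-up example.

```python
def clip_boundaries(duration_s, clip_s=45.0, keep_partial=False):
    """Return (start, end) times in seconds for consecutive clips of a song.

    keep_partial controls whether a final segment shorter than clip_s is
    kept; the paper does not specify this, so it is an assumption here.
    """
    bounds = []
    start = 0.0
    while start + clip_s <= duration_s:
        bounds.append((start, start + clip_s))
        start += clip_s
    if keep_partial and start < duration_s:
        bounds.append((start, duration_s))
    return bounds


# Hypothetical 200-second song.
print(clip_boundaries(200.0))
print(clip_boundaries(200.0, keep_partial=True))

# Average number of clips per song implied by the reported dataset sizes.
print(round(3095 / 361, 2))
```

The last line uses only the counts reported above: 3095 clips from 361 songs is an average of roughly 8.6 clips per song.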

3.2. Proposed Framework for MER

This research aims to build and evaluate an emotion recognition (classification) system for Indonesian music, using CNN and RNN. CNN is a class of feed-forward neural network inspired by the brain’s visual cortex and is specifically designed to process grid-structured data. A CNN-based architecture is shown in Figure 3.

Figure 3: Convolutional Neural Network Architecture

CNN is useful for analyzing image data [11]. Two-dimensional convolutional (Convolutional-2D) layers with Rectified Linear Unit (ReLU) activation functions were used.

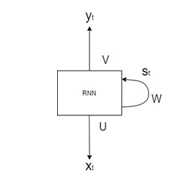

An RNN is a neural network that processes its input data over several steps, carrying information from one step to the next. RNNs are generally used for analyzing sequential data, for example in Natural Language Processing (NLP) [12] and speech recognition and analysis [13]. Other research claimed that RNN is useful for recognizing or predicting emotion in music [14]. An RNN-based architecture is shown in Figure 4.

Figure 4: Recurrent Neural Network Architecture

These two deep-learning methods were combined to solve the MER problem described earlier. A Convolutional-LSTM layer was used as the RNN. A Convolutional-LSTM is a type of recurrent neural network that has a convolutional structure in both the input-to-state and state-to-state transitions. Several models were built for comparison purposes, as shown in Tables 2 and 3.

Table 2: Proposed Model using CNN

| Layer | Configuration |
|---|---|
| Input | 126x258x1 |
| Convolutional | 16 filters |
| Convolutional | 32 filters |
| Convolutional | 64 filters |
| Convolutional | 112 filters |
| Max-Pooling | 2,2 pool size |
| Flatten | |
| Dense | 128 units |
| Dense | 128 units |
| Dense | 3 units |

A 3x32x3 kernel was used for the CNN layers in the first model. The model has four convolutional layers and three fully connected layers; ReLU activation was applied to the convolutional and dense layers, and the output layer used SoftMax activation. Adam was used as the optimizer and categorical cross-entropy as the loss function. For comparison purposes, a CRNN model was also built (Table 3).
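The output layer and loss named above can be illustrated with plain Python. This sketch shows how a SoftMax layer turns three raw scores into class probabilities and how categorical cross-entropy scores them against a one-hot label. The logits and the class order are hypothetical; in practice Keras computes both internally.

```python
import math


def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    m = max(logits)  # subtract the maximum for numerical stability
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


def categorical_cross_entropy(one_hot, probs, eps=1e-12):
    """Negative log-probability assigned to the true class."""
    return -sum(t * math.log(max(p, eps)) for t, p in zip(one_hot, probs))


CLASSES = ["negative", "neutral", "positive"]  # assumed class order
logits = [0.2, 1.5, 0.3]    # hypothetical outputs of the last dense layer
target = [0.0, 1.0, 0.0]    # one-hot label for "neutral"

probs = softmax(logits)
loss = categorical_cross_entropy(target, probs)
predicted = CLASSES[probs.index(max(probs))]
print(predicted, [round(p, 3) for p in probs], round(loss, 4))
```

The loss is small when the probability of the true class is close to 1 and grows as that probability shrinks, which is what the Adam optimizer minimizes during training.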

Table 3: Proposed Model using CRNN

| Layer | Configuration |
|---|---|
| Input | 126x258x1 |
| Convolutional | 16 filters |
| Convolutional-LSTM | 30 filters |
| Convolutional | 36 filters |
| Convolutional-LSTM | 50 filters |
| Flatten | |
| Dense | 128 units |
| Dense | 128 units |
| Dense | 3 units |

A 2×3 kernel was used for the convolutional and Convolutional-LSTM layers in this model. ReLU activation was again applied to the convolutional, Convolutional-LSTM, and dense layers, and SoftMax was used as the output layer activation. This model also used the Adam optimizer and the categorical cross-entropy loss function.

4. Result and Discussion

As described earlier, the input dataset for the proposed methods consists of 361 songs: 86 negative, 156 neutral, and 119 positive. Of the 361 spectrogram images, 80% were used as the training dataset, 10% as the validation dataset, and 10% as the test dataset. We split the data using the validation split option (validation_split) of ImageDataGenerator, which is available in Keras. Before applying it, we manually calculated the weight of each label by comparing the label counts in each dataset. We then combined the training and validation datasets into one folder to be split by that option, while the test dataset was saved in a separate folder. The experiment was run on two datasets: the full-song dataset containing 361 spectrograms and the 45-second-clip dataset containing 3095 spectrograms.
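The sketch below is a plain-Python stand-in for the split and weighting steps, not the Keras routine the authors used. It assumes a per-label (stratified) 80/10/10 split and inverse-frequency class weights; the paper does not give the exact weighting formula, and exact split sizes depend on how fractions are rounded.

```python
import random

# Label counts of the full-song dataset, as reported in the text.
LABEL_COUNTS = {"negative": 86, "neutral": 156, "positive": 119}


def class_weights(counts):
    """Inverse-frequency weights: total / (n_classes * count). Assumed formula."""
    total = sum(counts.values())
    return {label: total / (len(counts) * n) for label, n in counts.items()}


def stratified_split(items_by_label, fractions=(0.8, 0.1, 0.1), seed=0):
    """Split each label's items into train/validation/test by the given fractions."""
    rng = random.Random(seed)
    train, val, test = [], [], []
    for label, items in items_by_label.items():
        items = list(items)
        rng.shuffle(items)
        n_train = round(fractions[0] * len(items))
        n_val = round(fractions[1] * len(items))
        train += [(x, label) for x in items[:n_train]]
        val += [(x, label) for x in items[n_train:n_train + n_val]]
        test += [(x, label) for x in items[n_train + n_val:]]
    return train, val, test


# Placeholder file names standing in for the spectrogram images.
songs = {label: [f"{label}_{i}" for i in range(n)] for label, n in LABEL_COUNTS.items()}
train, val, test = stratified_split(songs)
print(len(train), len(val), len(test))
print({k: round(v, 3) for k, v in class_weights(LABEL_COUNTS).items()})
```

With inverse-frequency weights, the neutral label (the largest group) receives the smallest weight and the negative label (the smallest group) the largest.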

4.1. Full-songs Experiment

In this experiment, the complete version of each song (the full track) was used. The test performance of MER using the two proposed models, CNN and CRNN, is presented in Table 4.

Table 4: Summary of Test Result Using Full-Songs Dataset

| Model | Training Accuracy | Validation Accuracy | Test Accuracy |
|---|---|---|---|
| CNN | 55.33% | 44.12% | 41.66% |
| CRNN | 75.95% | 52.94% | 58.33% |

From the performance summary in Table 4, the CRNN model outperforms the CNN model in training, validation, and test accuracy.
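To put the test accuracies in Table 4 into perspective, the sketch below converts them into counts of correctly classified songs. The test-set size of 36 is an assumption (about 10% of 361 songs); the paper does not state the exact number.

```python
def closest_correct_count(accuracy_pct, n_test):
    """Number of correct predictions that best matches a reported accuracy."""
    return round(accuracy_pct / 100 * n_test)


N_TEST = 36  # assumption: roughly 10% of the 361 full-song spectrograms

for model, acc in [("CNN", 41.66), ("CRNN", 58.33)]:
    k = closest_correct_count(acc, N_TEST)
    print(f"{model}: about {k} of {N_TEST} correct ({100 * k / N_TEST:.2f}%)")
```

Under that assumption, the gap between the two models corresponds to a handful of songs, so the comparison is best read as indicative rather than conclusive.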

4.2. 45-second-clip Experiment

45-second clips were also used in the experiment because 45 seconds is considered long enough for humans to recognize the emotion in a song [3]. As in the full-song experiment, CNN and CRNN models were developed to investigate MER performance. The results are shown in Table 5.

Table 5: Summary of Test Results Using 45-Second-Clip Songs Dataset

| Model | Training Accuracy | Validation Accuracy | Test Accuracy |
|---|---|---|---|
| CNN | 43.14% | 43.18% | 38% |
| CRNN | 52.46% | 47.73% | 38% |

4.3. Discussion

We process our data using Algorithm 1.

| Algorithm 1: Audio time-series/y parameters |
|---|
| Result: array of windowed samples from the audio time-series y |
| 1. for each data in datas do |
| 2. load the audio from the audio’s path; |
| 3. define y using the librosa library (librosa.load(audio path)); |
| 4. define start_sample as 0; |
| 5. define length_sample as time_steps * hop_length; |
| 6. define window as an empty array; |
| 7. for i from start_sample to start_sample + length_sample - 1 do |
| 8. append y[i] to window; |
| 9. end for |
| 10. end for |
| 11. return array of window |

In Algorithm 1 we capture an array of samples from each song. The more data we capture, the more features can be extracted from the audio. The amount of data captured is the product of time_steps and hop_length: the higher this number, the more features the spectrogram can cover. Following that logic, we assumed that a 45-second clip, being shorter, could generate better results because the spectrogram would cover all of its features. The more samples Algorithm 1 returns, the more sensitive the spectrogram becomes in capturing the features of the audio. In other words, using the optimal time_steps and hop_length could result in more complete data and a short pre-processing time.
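The windowing step of Algorithm 1 amounts to slicing the first time_steps × hop_length samples from the audio time-series. The sketch below uses a plain list in place of the array that librosa.load would return, and it assumes a sampling rate of 22050 Hz (the librosa.load default), which the paper does not state.

```python
def first_window(y, time_steps=256, hop_length=4096, start_sample=0):
    """Return the slice of y that feeds one spectrogram of `time_steps` frames."""
    length_sample = time_steps * hop_length
    return y[start_sample:start_sample + length_sample]


def window_duration_s(time_steps=256, hop_length=4096, sample_rate=22050):
    """Duration in seconds covered by one window (sample_rate is an assumption)."""
    return time_steps * hop_length / sample_rate


# One minute of silence as a stand-in for a decoded audio signal.
y = [0.0] * (22050 * 60)
window = first_window(y)
print(len(window), round(window_duration_s(), 2))
```

Under the assumed sampling rate, one window of 256 × 4096 = 1,048,576 samples covers a little under 48 seconds of audio, which is close to the 45-second clip length.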

However, the results indicate that the full-song model performs better than the shorter-clip one. We believe the reason is that our assumption for the shorter clip only holds when the goal is to focus on a specific point of the song, whereas here we want the overall emotion of the full audio. With a 45-second clip, the machine cannot capture the whole essence of the song and focuses only on that specific part. The machine then faces ambiguity, because one part of a song may show a positive emotion and be followed by a neutral or even negative one. To use the 45-second model, we might have to add a more complex algorithm to determine the emotion of the whole song. We therefore consider it better to use the full song for music emotion recognition, so that the model sees the major points of the song instead of only a specific one.
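One simple candidate for such a song-level step is to aggregate the per-clip predictions. The sketch below shows two common options, majority voting and probability averaging. It illustrates the general idea only and is not a method evaluated in this paper; the clip predictions are hypothetical.

```python
from collections import Counter

CLASSES = ("negative", "neutral", "positive")  # assumed class order


def song_label_from_clips(clip_predictions):
    """Majority vote over per-clip labels; ties go to the label seen first."""
    counts = Counter(clip_predictions)
    best = max(counts.values())
    for label in clip_predictions:
        if counts[label] == best:
            return label


def song_label_from_probabilities(clip_probs, classes=CLASSES):
    """Average the per-clip class probabilities, then pick the largest."""
    n = len(clip_probs)
    mean = [sum(p[i] for p in clip_probs) / n for i in range(len(classes))]
    return classes[mean.index(max(mean))]


# Hypothetical predictions for the clips of one song.
clips = ["positive", "neutral", "positive", "negative", "positive"]
probs = [[0.2, 0.5, 0.3], [0.1, 0.2, 0.7], [0.2, 0.3, 0.5]]
print(song_label_from_clips(clips))
print(song_label_from_probabilities(probs))
```

Either variant lets a song that mixes positive, neutral, and negative passages receive a single label that reflects most of its clips.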

While conducting our experiments we also found that, with many convolutional layers, the machine tends to assign a neutral label to most clips and stops learning, which we attribute to overfitting. When we used more than five convolutional layers with large filters, or fewer than five layers with even larger filters, the machine’s performance tended to decline and it stopped learning. We therefore need a balanced architecture, such as the one in Table 2. This applies not only to the convolutional model but also to convolutional recurrent networks with more than three recurrent layers and two convolutional layers: overfitting becomes more likely when there are too many convolutional recurrent layers, and the machine again tends to label the audio as neutral. We tried using a dropout layer to overcome overfitting, but it did not make a significant difference; changing the kernel size and the number of filters is what helped. Another possible reason is that neutral was the dominant label in this experiment, so the machine absorbed more information from neutral-emotion data. Neutral emotion is, in a way, a bridge between the positive and negative emotion datasets.
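The effect of the dominant neutral label can be quantified with a majority-class baseline, that is, the accuracy of a model that always predicts the most frequent label. The sketch below uses the label counts reported in Section 3.1; it is computed over the whole dataset, so the share in the actual test split may differ slightly.

```python
def majority_baseline(counts):
    """Return the most frequent label and the accuracy of always predicting it."""
    label = max(counts, key=counts.get)
    return label, counts[label] / sum(counts.values())


# Label counts as reported for the two datasets.
FULL_SONGS = {"negative": 86, "neutral": 156, "positive": 119}
CLIPS = {"negative": 767, "neutral": 1308, "positive": 1020}

for name, counts in [("full songs", FULL_SONGS), ("45-second clips", CLIPS)]:
    label, share = majority_baseline(counts)
    print(f"{name}: always predicting '{label}' scores {share:.2%}")
```

A model that collapses to the neutral label would score a little over 40% on either dataset, which is a useful reference point when reading the accuracies in Tables 4 and 5.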

5. Conclusion and Future Works

In this work, we proposed music emotion recognition using spectrograms with a Convolutional Neural Network (CNN) and a Convolutional Recurrent Neural Network (CRNN). The data are songs from Indonesia. The spectrograms were built with time_steps = 256, n_mels = 128, and hop_length = 4096. Two experiments were conducted: one using full songs and one using 45-second clips. CNN achieved test accuracies of 41.66% and 38% on the full-song and 45-second-clip datasets, respectively, while CRNN achieved 58.33% and 38% on the same two datasets.

From this experiment, we conclude that using the full-song dataset achieves better MER accuracy than using the 45-second-clip dataset. However, more experiments are needed to confirm this finding, such as adding a more complex algorithm to support the 45-second dataset so that the machine can predict the emotion more precisely while still reflecting the whole audio instead of only one part. More data also needs to be collected in future work to achieve higher accuracy and to use more label categories. We suspect that the limited accuracy is partly due to ambiguity within a single label or ambiguity in this experiment, so we expect that using more labels in future work will be more accurate. To address overfitting, we plan to use an ensemble method in future research. Moreover, it would be interesting to include the songs’ lyrics as additional features to develop a multimodal music emotion recognition system.

Conflicts of Interest

The authors declare no conflict of interest.

Acknowledgement

The authors would like to thank everyone who helped with this research, and Bina Nusantara University for supporting the authors in completing this paper.

References

1. C. Pêcher, C. Lemercier, J.M. Cellier, “The influence of emotions on driving behavior,” Traffic Psychology: An International Perspective, (January), 145–158, 2011.

2. J.A. Russell, “A circumplex model of affect,” Journal of Personality and Social Psychology, 39(6), 1161–1178, 1980, doi:10.1037/h0077714.

3. T. Liu, L. Han, L. Ma, D. Guo, “Audio-based deep music emotion recognition,” AIP Conference Proceedings, 1967(May 2018), 2018, doi:10.1063/1.5039095.

4. A. Bhattacharya, K. V. Kadambari, “A Multimodal Approach towards Emotion Recognition of Music using Audio and Lyrical Content,” 2018.

5. H. Liu, Y. Fang, Q. Huang, “Music Emotion Recognition Using a Variant of Recurrent Neural Network,” 164(Mmssa 2018), 15–18, 2019, doi:10.2991/mmssa-18.2019.4.

6. X. Liu, Q. Chen, X. Wu, Y. Liu, Y. Liu, “CNN based music emotion classification,” 2017.

7. B. Jeo, C. Kim, A. Kim, D. Kim, J. Park, J.-W. Ha, “Music Emotion Recognition via End-to-End Multimodal Neural Networks,” ICASSP, IEEE International Conference on Acoustics, Speech and Signal Processing – Proceedings, 2, 2017.

8. Y.H. Yang, H.H. Chen, “Machine recognition of music emotion: A review,” ACM Transactions on Intelligent Systems and Technology, 3(3), 2012, doi:10.1145/2168752.2168754.

9. T. Bertin-Mahieux, D.P.W. Ellis, B. Whitman, P. Lamere, “The million song dataset,” Proceedings of the 12th International Society for Music Information Retrieval Conference, ISMIR 2011, (Ismir), 591–596, 2011.

10. R. Akella, T.S. Moh, “Mood classification with lyrics and convnets,” Proceedings – 18th IEEE International Conference on Machine Learning and Applications, ICMLA 2019, 511–514, 2019, doi:10.1109/ICMLA.2019.00095.

11. Y. Lecun, Y. Bengio, “Convolutional Networks for Images, Speech, and Time Series Variable-Size Convolutional Networks: SDNNs,” Processing, 2010, doi:10.1109/IJCNN.2004.1381049.

12. A. Hassan, “Sentiment Analysis with Recurrent Neural Network and Unsupervised Ph.D. Candidate: Abdalraouf Hassan, Advisor: Ausif Mahmood, Dep of Computer Science and Engineering, University of Bridgeport, CT, 06604, USA,” (March), 2–4, 2017.

13. A. Amberkar, P. Awasarmol, G. Deshmukh, P. Dave, “Speech Recognition using Recurrent Neural Networks,” Proceedings of the 2018 International Conference on Current Trends towards Converging Technologies, ICCTCT 2018, (June), 1–4, 2018, doi:10.1109/ICCTCT.2018.8551185.

14. M. Xu, X. Li, H. Xianyu, J. Tian, F. Meng, W. Chen, “Multi-scale Approaches to the MediaEval 2015 “Emotion in Music” Task,” 5–7, 2015.


- Nadia Jmour, Slim Masmoudi, Afef Abdelkrim, "A New Video Based Emotions Analysis System (VEMOS): An Efficient Solution Compared to iMotions Affectiva Analysis Software", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 2, pp. 990–1001, 2021. doi: 10.25046/aj0602114

- Bakhtyar Ahmed Mohammed, Muzhir Shaban Al-Ani, "Follow-up and Diagnose COVID-19 Using Deep Learning Technique", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 2, pp. 971–976, 2021. doi: 10.25046/aj0602111

- Showkat Ahmad Dar, S Palanivel, "Performance Evaluation of Convolutional Neural Networks (CNNs) And VGG on Real Time Face Recognition System", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 2, pp. 956–964, 2021. doi: 10.25046/aj0602109

- Kenza Aitelkadi, Hicham Outmghoust, Salahddine laarab, Kaltoum Moumayiz, Imane Sebari, "Detection and Counting of Fruit Trees from RGB UAV Images by Convolutional Neural Networks Approach", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 2, pp. 887–893, 2021. doi: 10.25046/aj0602101

- Binghan Li, Yindong Hua, Mi Lu, "Advanced Multiple Linear Regression Based Dark Channel Prior Applied on Dehazing Image and Generating Synthetic Haze", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 2, pp. 790–800, 2021. doi: 10.25046/aj060291

- Shahnaj Parvin, Liton Jude Rozario, Md. Ezharul Islam, "Vehicle Number Plate Detection and Recognition Techniques: A Review", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 2, pp. 423–438, 2021. doi: 10.25046/aj060249

- Prasham Shah, Mohamed El-Sharkawy, "A-MnasNet and Image Classification on NXP Bluebox 2.0", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 1, pp. 1378–1383, 2021. doi: 10.25046/aj0601157

- Byeongwoo Kim, Jongkyu Lee, "Fault Diagnosis and Noise Robustness Comparison of Rotating Machinery using CWT and CNN", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 1, pp. 1279–1285, 2021. doi: 10.25046/aj0601146

- Alisson Steffens Henrique, Anita Maria da Rocha Fernandes, Rodrigo Lyra, Valderi Reis Quietinho Leithardt, Sérgio D. Correia, Paul Crocker, Rudimar Luis Scaranto Dazzi, "Classifying Garments from Fashion-MNIST Dataset Through CNNs", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 1, pp. 989–994, 2021. doi: 10.25046/aj0601109

- Reem Bayari, Ameur Bensefia, "Text Mining Techniques for Cyberbullying Detection: State of the Art", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 1, pp. 783–790, 2021. doi: 10.25046/aj060187

- Imane Jebli, Fatima-Zahra Belouadha, Mohammed Issam Kabbaj, Amine Tilioua, "Deep Learning based Models for Solar Energy Prediction", Advances in Science, Technology and Engineering Systems Journal, vol. 6, no. 1, pp. 349–355, 2021. doi: 10.25046/aj060140

- Xianxian Luo, Songya Xu, Hong Yan, "Application of Deep Belief Network in Forest Type Identification using Hyperspectral Data", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 1554–1559, 2020. doi: 10.25046/aj0506186

- Majdouline Meddad, Chouaib Moujahdi, Mounia Mikram, Mohammed Rziza, "Optimization of Multi-user Face Identification Systems in Big Data Environments", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 762–767, 2020. doi: 10.25046/aj050691

- Kin Yun Lum, Yeh Huann Goh, Yi Bin Lee, "American Sign Language Recognition Based on MobileNetV2", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 481–488, 2020. doi: 10.25046/aj050657

- Gede Putra Kusuma, Jonathan, Andreas Pangestu Lim, "Emotion Recognition on FER-2013 Face Images Using Fine-Tuned VGG-16", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 315–322, 2020. doi: 10.25046/aj050638

- Lubna Abdelkareim Gabralla, "Dense Deep Neural Network Architecture for Keystroke Dynamics Authentication in Mobile Phone", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 307–314, 2020. doi: 10.25046/aj050637

- Helen Kottarathil Joy, Manjunath Ramachandra Kounte, "A Comprehensive Review of Traditional Video Processing", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 274–279, 2020. doi: 10.25046/aj050633

- Kailerk Treetipsounthorn, Thanisorn Sriudomporn, Gridsada Phanomchoeng, Christian Dengler, Setha Panngum, Sunhapos Chantranuwathana, Ali Zemouche, "Vehicle Rollover Detection in Tripped and Untripped Rollovers using Recurrent Neural Networks", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 228–238, 2020. doi: 10.25046/aj050627

- Andrea Generosi, Silvia Ceccacci, Samuele Faggiano, Luca Giraldi, Maura Mengoni, "A Toolkit for the Automatic Analysis of Human Behavior in HCI Applications in the Wild", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 185–192, 2020. doi: 10.25046/aj050622

- Pamela Zontone, Antonio Affanni, Riccardo Bernardini, Leonida Del Linz, Alessandro Piras, Roberto Rinaldo, "Supervised Learning Techniques for Stress Detection in Car Drivers", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 6, pp. 22–29, 2020. doi: 10.25046/aj050603

- Sherif H. ElGohary, Aya Lithy, Shefaa Khamis, Aya Ali, Aya Alaa el-din, Hager Abd El-Azim, "Interactive Virtual Rehabilitation for Aphasic Arabic-Speaking Patients", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 1225–1232, 2020. doi: 10.25046/aj0505148

- Daniyar Nurseitov, Kairat Bostanbekov, Maksat Kanatov, Anel Alimova, Abdelrahman Abdallah, Galymzhan Abdimanap, "Classification of Handwritten Names of Cities and Handwritten Text Recognition using Various Deep Learning Models", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 934–943, 2020. doi: 10.25046/aj0505114

- Khalid A. AlAfandy, Hicham Omara, Mohamed Lazaar, Mohammed Al Achhab, "Using Classic Networks for Classifying Remote Sensing Images: Comparative Study", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 770–780, 2020. doi: 10.25046/aj050594

- Khalid A. AlAfandy, Hicham, Mohamed Lazaar, Mohammed Al Achhab, "Investment of Classic Deep CNNs and SVM for Classifying Remote Sensing Images", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 652–659, 2020. doi: 10.25046/aj050580

- Lana Abdulrazaq Abdullah, Muzhir Shaban Al-Ani, "CNN-LSTM Based Model for ECG Arrhythmias and Myocardial Infarction Classification", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 601–606, 2020. doi: 10.25046/aj050573

- Chigozie Enyinna Nwankpa, "Advances in Optimisation Algorithms and Techniques for Deep Learning", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 563–577, 2020. doi: 10.25046/aj050570

- Sathyabama Kaliyapillai, Saruladha Krishnamurthy, "Differential Evolution based Hyperparameters Tuned Deep Learning Models for Disease Diagnosis and Classification", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 253–261, 2020. doi: 10.25046/aj050531

- Hicham Moujahid, Bouchaib Cherradi, Oussama El Gannour, Lhoussain Bahatti, Oumaima Terrada, Soufiane Hamida, "Convolutional Neural Network Based Classification of Patients with Pneumonia using X-ray Lung Images", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 167–175, 2020. doi: 10.25046/aj050522

- Mohsine Elkhayati, Youssfi Elkettani, "Towards Directing Convolutional Neural Networks Using Computational Geometry Algorithms: Application to Handwritten Arabic Character Recognition", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 5, pp. 137–147, 2020. doi: 10.25046/aj050519

- Nghia Duong-Trung, Luyl-Da Quach, Chi-Ngon Nguyen, "Towards Classification of Shrimp Diseases Using Transferred Convolutional Neural Networks", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 4, pp. 724–732, 2020. doi: 10.25046/aj050486

- Tran Thanh Dien, Nguyen Thanh-Hai, Nguyen Thai-Nghe, "Deep Learning Approach for Automatic Topic Classification in an Online Submission System", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 4, pp. 700–709, 2020. doi: 10.25046/aj050483

- Adonis Santos, Patricia Angela Abu, Carlos Oppus, Rosula Reyes, "Real-Time Traffic Sign Detection and Recognition System for Assistive Driving", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 4, pp. 600–611, 2020. doi: 10.25046/aj050471

- Maroua Abdellaoui, Dounia Daghouj, Mohammed Fattah, Younes Balboul, Said Mazer, Moulhime El Bekkali, "Artificial Intelligence Approach for Target Classification: A State of the Art", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 4, pp. 445–456, 2020. doi: 10.25046/aj050453

- Roberta Avanzato, Francesco Beritelli, "A CNN-based Differential Image Processing Approach for Rainfall Classification", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 4, pp. 438–444, 2020. doi: 10.25046/aj050452

- Samir Allach, Mohamed Ben Ahmed, Anouar Abdelhakim Boudhir, "Deep Learning Model for A Driver Assistance System to Increase Visibility on A Foggy Road", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 4, pp. 314–322, 2020. doi: 10.25046/aj050437

- Rizki Jaka Maulana, Gede Putra Kusuma, "Malware Classification Based on System Call Sequences Using Deep Learning", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 4, pp. 207–216, 2020. doi: 10.25046/aj050426

- Van-Hung Le, Hung-Cuong Nguyen, "A Survey on 3D Hand Skeleton and Pose Estimation by Convolutional Neural Network", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 4, pp. 144–159, 2020. doi: 10.25046/aj050418

- Hai Thanh Nguyen, Nhi Yen Kim Phan, Huong Hoang Luong, Trung Phuoc Le, Nghi Cong Tran, "Efficient Discretization Approaches for Machine Learning Techniques to Improve Disease Classification on Gut Microbiome Composition Data", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 3, pp. 547–556, 2020. doi: 10.25046/aj050368

- Neptali Montañez, Jomari Joseph Barrera, "Automated Abaca Fiber Grade Classification Using Convolution Neural Network (CNN)", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 3, pp. 207–213, 2020. doi: 10.25046/aj050327

- Aditi Haresh Vyas, Mayuri A. Mehta, "A Comprehensive Survey on Image Modality Based Computerized Dry Eye Disease Detection Techniques", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 2, pp. 748–756, 2020. doi: 10.25046/aj050293

- Yeji Shin, Youngone Cho, Hyun Wook Kang, Jin-Gu Kang, Jin-Woo Jung, "Neural Network-based Efficient Measurement Method on Upside Down Orientation of a Digital Document", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 2, pp. 697–702, 2020. doi: 10.25046/aj050286

- Ola Surakhi, Sami Serhan, Imad Salah, "On the Ensemble of Recurrent Neural Network for Air Pollution Forecasting: Issues and Challenges", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 2, pp. 512–526, 2020. doi: 10.25046/aj050265

- Bokyoon Na, Geoffrey C Fox, "Object Classifications by Image Super-Resolution Preprocessing for Convolutional Neural Networks", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 2, pp. 476–483, 2020. doi: 10.25046/aj050261

- Bayan O Al-Amri, Mohammed A. AlZain, Jehad Al-Amri, Mohammed Baz, Mehedi Masud, "A Comprehensive Study of Privacy Preserving Techniques in Cloud Computing Environment", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 2, pp. 419–424, 2020. doi: 10.25046/aj050254

- Lenin G. Falconi, Maria Perez, Wilbert G. Aguilar, Aura Conci, "Transfer Learning and Fine Tuning in Breast Mammogram Abnormalities Classification on CBIS-DDSM Database", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 2, pp. 154–165, 2020. doi: 10.25046/aj050220

- Daihui Li, Chengxu Ma, Shangyou Zeng, "Design of Efficient Convolutional Neural Module Based on An Improved Module", Advances in Science, Technology and Engineering Systems Journal, vol. 5, no. 1, pp. 340–345, 2020. doi: 10.25046/aj050143

- Andary Dadang Yuliyono, Abba Suganda Girsang, "Artificial Bee Colony-Optimized LSTM for Bitcoin Price Prediction", Advances in Science, Technology and Engineering Systems Journal, vol. 4, no. 5, pp. 375–383, 2019. doi: 10.25046/aj040549

- Shun Yamamoto, Momoyo Ito, Shin-ichi Ito, Minoru Fukumi, "Verification of the Usefulness of Personal Authentication with Aerial Input Numerals Using Leap Motion", Advances in Science, Technology and Engineering Systems Journal, vol. 4, no. 5, pp. 369–374, 2019. doi: 10.25046/aj040548

- Kevin Yudi, Suharjito, "Sentiment Analysis of Transjakarta Based on Twitter using Convolutional Neural Network", Advances in Science, Technology and Engineering Systems Journal, vol. 4, no. 5, pp. 281–286, 2021. doi: 10.25046/aj040535

- Bui Thanh Hung, "Integrating Diacritics Restoration and Question Classification into Vietnamese Question Answering System", Advances in Science, Technology and Engineering Systems Journal, vol. 4, no. 5, pp. 207–212, 2019. doi: 10.25046/aj040526

- Priyamvada Chandel, Tripta Thakur, "Smart Meter Data Analysis for Electricity Theft Detection using Neural Networks", Advances in Science, Technology and Engineering Systems Journal, vol. 4, no. 4, pp. 161–168, 2019. doi: 10.25046/aj040420

- Ajees Arimbassery Pareed, Sumam Mary Idicula, "A Relation Extraction System for Indian Languages", Advances in Science, Technology and Engineering Systems Journal, vol. 4, no. 2, pp. 65–69, 2019. doi: 10.25046/aj040208

- Bok Gyu Han, Hyeon Seok Yang, Ho Gyeong Lee, Young Shik Moon, "Low Contrast Image Enhancement Using Convolutional Neural Network with Simple Reflection Model", Advances in Science, Technology and Engineering Systems Journal, vol. 4, no. 1, pp. 159–164, 2019. doi: 10.25046/aj040115

- An-Ting Cheng, Chun-Yen Chen, Bo-Cheng Lai, Che-Huai Lin, "Software and Hardware Enhancement of Convolutional Neural Networks on GPGPUs", Advances in Science, Technology and Engineering Systems Journal, vol. 3, no. 2, pp. 28–39, 2018. doi: 10.25046/aj030204

- Marwa Farouk Ibrahim Ibrahim, Adel Ali Al-Jumaily, "Auto-Encoder based Deep Learning for Surface Electromyography Signal Processing", Advances in Science, Technology and Engineering Systems Journal, vol. 3, no. 1, pp. 94–102, 2018. doi: 10.25046/aj030111

- Myoung-Wan Koo, Guanghao Xu, Hyunjung Lee, Jungyun Seo, "Retrieving Dialogue History in Deep Neural Networks for Spoken Language Understanding", Advances in Science, Technology and Engineering Systems Journal, vol. 2, no. 3, pp. 1741–1747, 2017. doi: 10.25046/aj0203213

- Marwa Farouk Ibrahim Ibrahim, Adel Ali Al-Jumaily, "Self-Organizing Map based Feature Learning in Bio-Signal Processing", Advances in Science, Technology and Engineering Systems Journal, vol. 2, no. 3, pp. 505–512, 2017. doi: 10.25046/aj020365

- R. Manju Parkavi, M. Shanthi, M.C. Bhuvaneshwari, "Recent Trends in ELM and MLELM: A review", Advances in Science, Technology and Engineering Systems Journal, vol. 2, no. 1, pp. 69–75, 2017. doi: 10.25046/aj020108